Intention Is All You Need

We spent twenty years telling everyone to learn to code. Now code writes itself. The question was never about code. It was about knowing what to build.

For two decades, “learn to code” was the safest career advice in the world. Now AI writes the code. But no one ever taught the harder skill: knowing what is worth building.

Consider what this looks like in practice. In January 2026, a three-person startup in Y Combinator’s latest cohort shipped a product in weeks that would have required a fifteen-person engineering team and six months two years earlier. Ninety-five percent of their codebase was AI-generated. The founders spent their days not writing code but arguing about what the product should do, which users to prioritize, whether the pricing model matched the value proposition. The code was the easy part. Knowing what to build was the job.

They were not unusual. By early 2026, the ground had shifted across the industry. Anthropic disclosed that its engineers now use Claude for roughly 60 percent of their work, up from 28 percent a year earlier, and that Claude Cowork, the company’s autonomous desktop assistant, was itself built with Claude Code in ten days. Not assisting engineers. Doing the engineering, while humans reviewed the output and decided whether to ship it.

The pattern extended far beyond one company. Anthropic released a legal plugin for Claude Cowork that automates contract review, NDA triage, and compliance workflows, tasks that once consumed most of a junior lawyer’s day. Microsoft had deployed more than 25 AI agents across its supply chain, with a target of over 100 by year’s end. McKinsey had 25,000 agents working alongside 40,000 employees, with a goal of reaching parity by year’s end.

These were not research demos. These were production systems, running at scale, in the real economy.

And yet for two decades, the answer to every question about economic displacement had been the same. Blue-collar jobs disappearing? Learn to code. Liberal arts degree not paying off? Learn to code. Worried about automation? Learn to code. Code was the safe harbor, the skill that would always be in demand, because software was eating the world and someone had to write it.

Now the software is writing itself.

The Vibe Shift

In February 2025, Andrej Karpathy, co-founder of OpenAI and former AI leader at Tesla, posted a casual description of how he’d been building software lately. He described what he wanted in plain English, accepted whatever the AI produced, and iterated by explaining what was wrong rather than fixing it. He never looked at the code. He called it “vibe coding”: you “fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

Within a year, vibe coding went from a throwaway coinage to a cultural phenomenon. Collins Dictionary named it their Word of the Year for 2025. By early 2026, 92 percent of US developers were using AI coding tools daily. Forty-one percent of all code written globally was AI-generated. Among Y Combinator’s Winter 2025 cohort, a quarter of startups had codebases that were 95 percent AI-generated. Cursor, an AI-native code editor, crossed $100 million in annual recurring revenue. The vibe coding market reached $4.7 billion. The adoption was global: Asia-Pacific led with over 40 percent of worldwide usage, suggesting this was not only a Silicon Valley phenomenon but a structural shift in how software gets made everywhere.

“This isn’t a fad,” Y Combinator CEO Garry Tan said. “This is the dominant way to code.” He was right. What he described as a revolution in coding was actually a revolution in what coding means. The hard part was no longer writing software. It was deciding what software to write.

Up the Stack

Scott H. Young, a writer and learning researcher with no professional software background, decided to build his own applications using vibe coding tools in late 2025. He made a spaced-repetition learning system, a Chinese video study guide, a watercolor painting planner. The AI handled all the implementation: scaffolding features, wiring integrations, generating tests. It worked.

But Young noticed something. The implementation difficulty had vanished. The conceptual difficulty had not.

When building his spaced-repetition tool, he needed to understand Zipf’s law, a statistical pattern governing how frequently words appear in natural language, along with cognitive science models of skill acquisition, and statistical methods for estimating learning rates to design the right algorithm. When he brought these ideas up, the AI followed his thinking and suggested helpful additions. When he didn’t, the AI followed along without flagging what was missing.

“Vibe coding has basically removed all of the actual implementation difficulty,” Young wrote, “but I’m still left with a lot of the conceptual difficulty of deciding what the behavior of the software should be.”

Professional engineers report the same experience at scale. Senior developers find that AI handles the implementation they once spent days on, leaving them to focus on architecture, system design, and the trade-offs between competing approaches. The code gets written faster. The decision of what code to write remains stubbornly human.

This is not new. Every wave of automation pushes human value up the same stack. Compilers eliminated assembly language. Spreadsheets eliminated manual calculation. Databases eliminated filing cabinets. CRM systems eliminated Rolodexes. Each time, the workers who adapted moved from execution to judgment: accountants became analysts, clerks became system architects, salespeople became relationship strategists. Vibe coding is the latest step, but it is the biggest one yet, because it doesn’t just eliminate a task. It collapses an entire layer of cognitive work: the translation from “what I want” to “code that does it.”

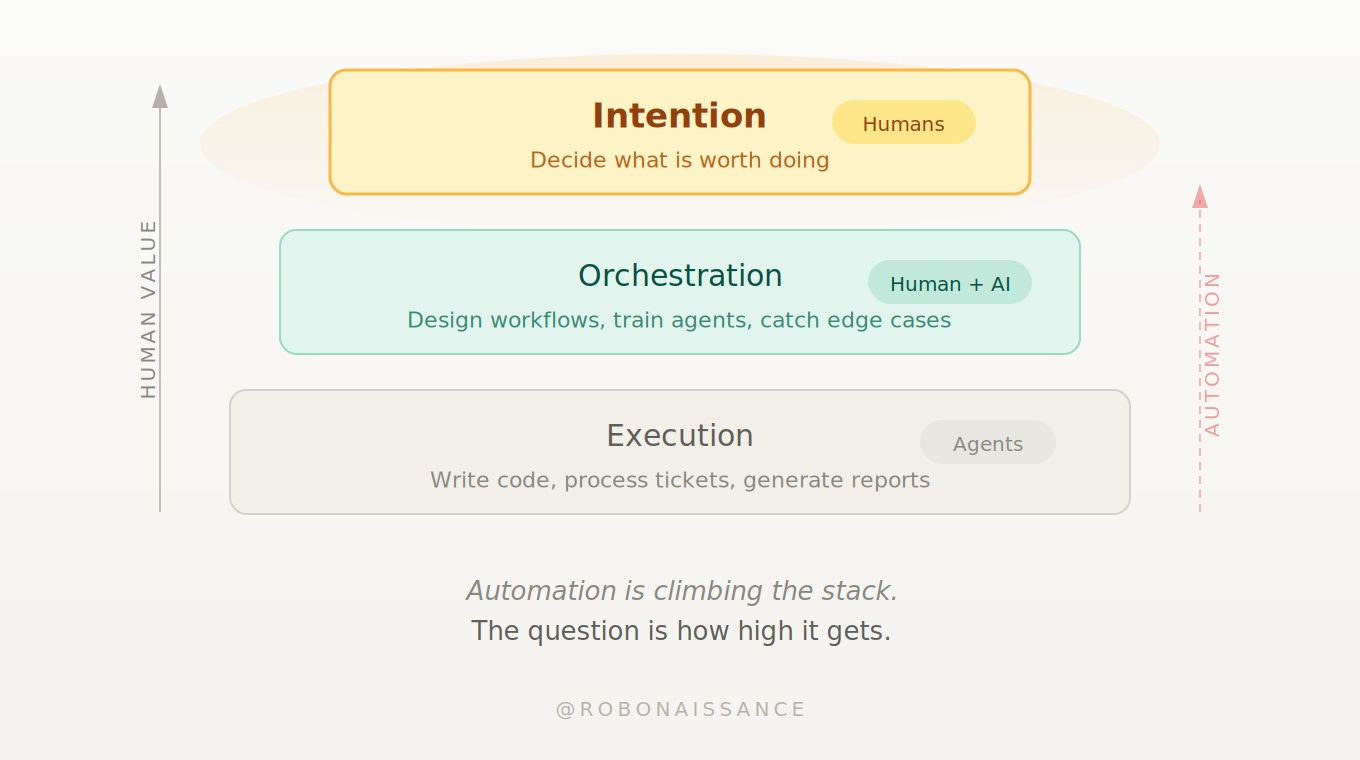

The result is a new economy with three layers. At the bottom, execution: agents write the code, process the tickets, generate the reports. In the middle, orchestration: humans design the workflows, train the agents, catch the edge cases. At the top, intention: someone decides what is worth doing in the first place. Automation is climbing the stack. The question is how high it gets.

What the Agents Can’t Want

The agents are already climbing.

Across the economy, AI agents are automating not just individual tasks but entire workflows. In a modern enterprise deployment, one agent collects requirements from a customer conversation. A second generates code. A third runs automated tests. A fourth manages the deployment pipeline. They maintain shared context and hand off work autonomously. No single agent replaces a person. The system replaces the workflow.

The same pattern extends to knowledge work. Deep research agents from Anthropic, OpenAI, and Perplexity can take a research question, autonomously decide which sources to consult, evaluate what they find, and synthesize a structured report, a process that once took an analyst days compressed into minutes. The agent handles the search strategy, the source evaluation, the synthesis. What it cannot do is decide which question is worth researching in the first place, or sense that the question everyone is asking is the wrong question entirely.

Gartner projects that 40 percent of enterprise applications will integrate task-specific AI agents by the end of 2026. Microsoft plans to equip every employee with AI support by year’s end. IBM’s Kate Blair, who leads the company’s agent infrastructure initiatives, summarized the moment: “2026 is when these patterns come out of the lab and into real life.”

This is intent-based computing. You state the desired outcome. Agents figure out how to deliver it. The name says it all. The human contribution is the intent. The era of paying for the privilege of doing your own work is ending. The next era charges for work done.

But intent means more than a prompt. A prompt is a surface expression. Intent is the judgment underneath it. It is knowing what problem is worth solving. It is sensing which customer need is real and which is noise. It is the product sense that tells a founder, three features into a roadmap, that the fourth feature is the one that will change the business. It is what Andy Zeng, co-founder of the robotics startup Generalist AI, calls “dark matter” in his own field: invisible, everywhere, responsible for most of what actually works.

Agents don’t have this. They execute what is asked of them with increasing competence. They do not ask whether the question is the right one. They have no stake in the outcome.

It is true that AI systems are learning to infer goals. Recommendation engines predict what you want. Training techniques like reinforcement learning from human feedback teach models to approximate human preferences by rewarding outputs that people rate highly. Future systems will get better at this. But inferring intent from data and forming intent from conviction are different things. One is pattern matching. The other is deciding that a pattern should exist in the first place. The gap may narrow. For now, and for any architecture currently imagined, it remains vast.

AI systems are already beginning to improve themselves: rewriting their own code, designing their own training runs, optimizing their own algorithms. But self-improvement is not self-direction. A model can lower its own test loss without knowing whether lower loss makes it more useful. It can optimize a benchmark without knowing whether the benchmark measures anything that matters. Recursive self-improvement accelerates execution. It does not replace the judgment of what to execute toward.

Attention, Then Intention

Intention does not make the transition painless. Most people who said “learn to code” never meant “learn to think about what to build.” They meant “get a safe job.” That job is no longer safe.

In 2017, a team of eight researchers at Google published a paper titled “Attention Is All You Need.” It introduced the Transformer architecture that would go on to power ChatGPT, Claude, Gemini, and nearly every major AI system built since. Attention was the mechanism: learnable, scalable, parallelizable. It told the model which parts of the input to focus on. It was the how.

Nine years later, attention has been extended from language into action. AI agents can now perceive data, reason about it, and execute complex workflows across the entire digital surface of our working lives. They write code, manage systems, triage legal disputes, handle customers, generate reports. The execution loop is closing.

What the loop does not contain, what it cannot contain, is the question of why it should run at all.

A coding agent does not know whether the software is worth building. A customer service agent does not know whether the product is worth selling. A financial agent does not know whether the company is worth fighting for.

Machines have attention. They increasingly have agency: the ability to perceive, decide, and act. What they do not have is intention: the capacity to decide that something matters. Not as a parameter to optimize but as a conviction to hold. Not as a prompt to follow but as a direction to set.

In a world increasingly populated by machines that can execute, the ability to decide what is worth executing becomes the scarcest and most valuable human capacity. Not scarce because it is computationally hard. Scarce because it requires something no current architecture provides: a point of view, a set of values, a stake in the future. You have to care about the answer to ask the question.

We spent twenty years telling people to learn to code. The better advice, it turns out, was always: learn what is worth building.

Attention is all the machines need. Intention is all we ever needed.

Loved this piece. The intention gap is one of the most underappreciated differences between humans and LLMs, and I think it maps cleanly onto comparative brain architecture. LLMs have something like a frontal lobe without the corticolimbic loop underneath it. No limbic input means no affective stake, no salience, no sense that anything actually matters. You can have all the executive function in the world, but without the subcortical substrate that makes things matter, intention is structurally unavailable.