No More Demos

The robotics industry has spent a decade making the most impressive videos in the history of technology. Sunday just raised $165 million to stop.

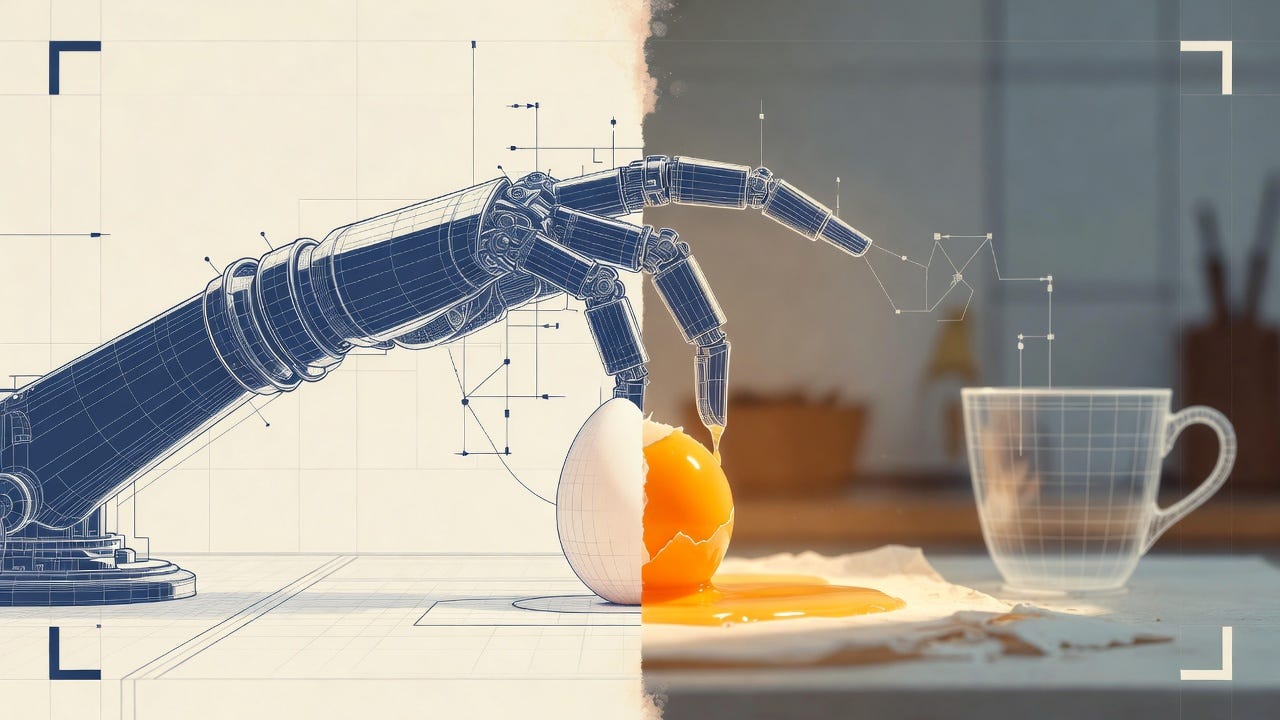

The egg does not break.

That is the thing you notice in every robotics demo. The robot reaches across the counter, its fingers close around the egg with something that looks like care, and the egg does not break. The crowd exhales. The video ends. The company posts it online, and for a day or two, the comment sections fill with people writing some variation o…