Roads to a Universal World Model, Part 5: The Architect’s Road

The representation path: understanding before action

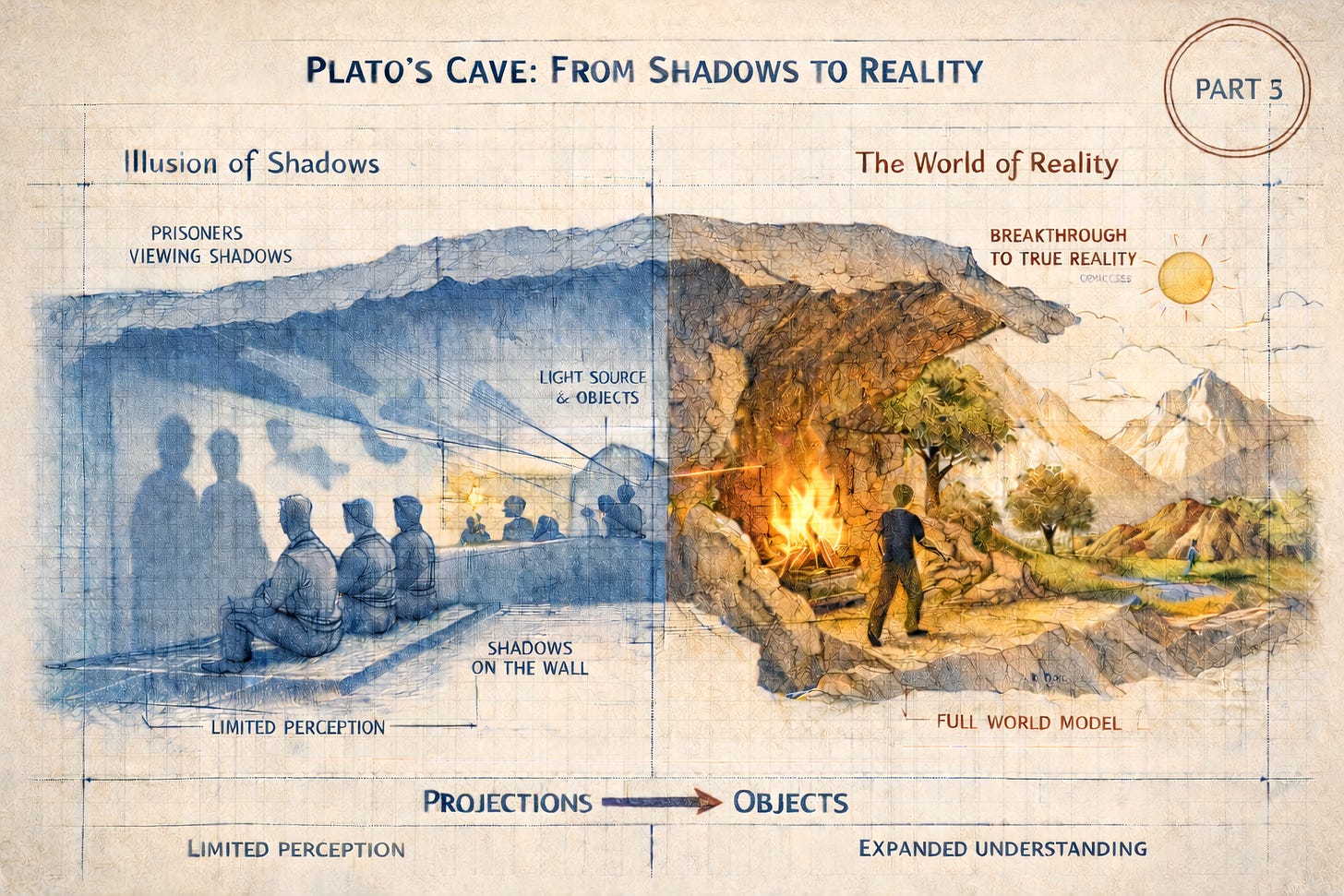

"How could they see anything but the shadows if they were never allowed to move their heads?" — Plato, Republic, Book VII

In the summer of 2022, while the rest of the AI world was still absorbing the implications of large language models, Yann LeCun published a document that read less like a research paper and more like a manifesto. Titled “A Path Toward…