Roads to a Universal World Model: The Video Gravity

When five approaches to understanding the physical world start merging into fewer, the map changes.

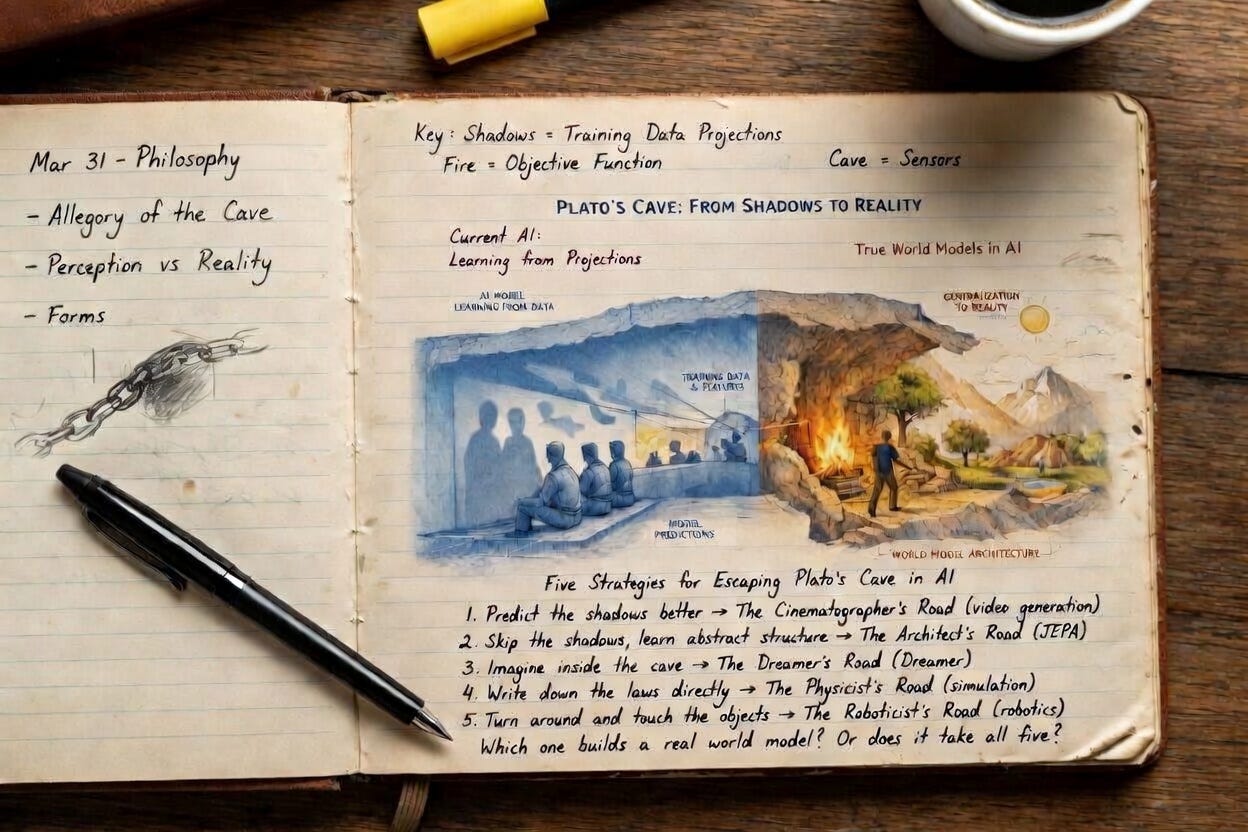

In the original Roads series, I mapped five distinct research traditions pursuing the same goal: a universal world model that understands how the physical world works. Five strategies for escaping Plato’s Cave, each with its own theory of what matters most. Predict the shadows better. Skip the shadows entirely. Imagine inside the cave. Write down the laws of physics directly. Turn around and touch the objects.

Those roads were parallel. They are not parallel anymore.

Over the past six months, the most striking papers in world model research have not stayed in their lanes. They have blurred the boundaries between roads. Video diffusion models that directly control robots. Imagination training that works on physical hardware, not just in Minecraft. Video simulators that replace hand-authored physics engines for policy evaluation. The roads are converging, and the force pulling them together is video.

This is important because convergence changes the strategic map. When roads were parallel, betting on the right road was the critical decision for labs, startups, and investors. If the roads are merging, the critical decision shifts: not which road to take, but which layer of the emerging stack to own.

Here is what the convergence looks like, road by road.

The Cinematographer’s Road: from film school to robotics lab

The Cinematographer’s Road has always been the most crowded. Video generation models trained on internet-scale data learn temporal causality, object interactions, and implicit physics simply by predicting the next frame. The question was always whether that knowledge could transfer to robot control, or whether video models would remain impressive but useless for anything beyond generating clips.

In early 2026, three papers from NVIDIA answered this question in three different ways.

DreamZero built a World Action Model that jointly predicts future video frames and robot actions from a single 14-billion-parameter diffusion backbone. No separate inverse dynamics module, no multi-stage pipeline. One model, both outputs. The result: more than double the generalization of the best vision-language-action models (VLAs) on unseen tasks, and cross-embodiment transfer to a new robot with only 30 minutes of unstructured play data.

DreamDojo took a different approach to the same road. Instead of merging video and action into one model, it built a foundation world model pretrained on 44,000 hours of egocentric human video, the largest dataset ever assembled for world model training. The key innovation: continuous latent actions extracted from video, which serve as a hardware-agnostic proxy for robot motor commands. Humans have already explored the combinatorics of physical interaction. DreamDojo turns that exploration into a robot simulator that runs at 10 frames per second.

Cosmos Policy asked the simplest question: what if you just fine-tuned the video model and changed nothing? Take Cosmos-Predict2, treat robot actions as additional latent frames in the existing diffusion process, and post-train on demonstration data. No architectural modifications. LIBERO: 98.5%. RoboCasa: 67.1%. Real-world bimanual tasks: 93.6%. State-of-the-art, from simplicity alone.
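To make "robot actions as additional latent frames" concrete, here is a toy sketch of the packing step only. It is not Cosmos Policy's code: the frame size, the zero-padding scheme, and the helper names `actions_to_frame` and `frame_to_actions` are all assumptions made for illustration.

```python
# Toy sketch: pack a robot action chunk into a "latent frame" so a video
# model's existing sequence format can carry it. All shapes are hypothetical.

FRAME_DIM = 16  # assumed size of one flattened latent frame


def actions_to_frame(actions, frame_dim=FRAME_DIM):
    """Flatten a (steps x dof) action chunk and zero-pad it to one frame."""
    flat = [value for step in actions for value in step]
    if len(flat) > frame_dim:
        raise ValueError("action chunk does not fit in one latent frame")
    return flat + [0.0] * (frame_dim - len(flat))


def frame_to_actions(frame, steps, dof):
    """Invert actions_to_frame: drop the padding and restore (steps x dof)."""
    flat = frame[: steps * dof]
    return [flat[i * dof:(i + 1) * dof] for i in range(steps)]


video_latents = [[0.1] * FRAME_DIM, [0.2] * FRAME_DIM]  # two stand-in video frames
action_chunk = [[0.5, -0.5, 0.0], [0.25, 0.0, 1.0]]      # 2 steps, 3 degrees of freedom

# The sequence a diffusion model would denoise: video frames plus one action frame.
sequence = video_latents + [actions_to_frame(action_chunk)]
recovered = frame_to_actions(sequence[-1], steps=2, dof=3)
```

The point of the sketch is that nothing about the sequence format changes: the action frame has the same shape as a video frame, so the backbone needs no new input or output heads.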

Three papers, three strategies, one road. A new architecture. A new dataset. Zero modifications. All three work. The Cinematographer’s Road is no longer asking whether video knowledge transfers to robotics. It is debating how.

The Dreamer’s Road: from Minecraft to the factory floor

Danijar Hafner’s DreamerV4 proved that an agent can train entirely inside its own imagination. In Minecraft. The agent never plays the game during training. It watches recorded videos, builds a world model, and practices inside that model’s predictions. The result: the first agent to obtain diamonds in Minecraft from purely offline data, through a sequence of roughly 20,000 actions.

The question left open was whether imagination training could survive contact with the real world, where physics is unforgiving and errors compound into hardware damage.

RISE answered this question directly. Built by a team spanning CUHK, Kinetix AI, HKU, Tsinghua, and Horizon Robotics, RISE trains robot policies via reinforcement learning inside a Compositional World Model. The world model predicts multi-view futures from proposed actions. A separate progress value model scores those futures. Together they produce advantage signals that drive policy improvement without a single physical trial-and-error cycle. Dynamic brick sorting on a moving conveyor belt: +35% over prior methods. Backpack packing with soft deformable objects: +45%. Box closing with precise bimanual coordination: +35%.
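The loop described above (imagine a future, score it, turn the score into an advantage) can be sketched in a few lines. This is a toy under stated assumptions, not RISE's implementation: `world_model` and `progress_value` are hand-written stand-ins for learned networks, the state is a single number, and the advantage is taken to be the gain in progress score over the current state.

```python
# Toy sketch of imagination-based advantage estimation. The world model and
# progress model below are hand-written stand-ins, not learned networks.

GOAL = 10.0


def world_model(state, action):
    """Hypothetical dynamics: predict the next state for a proposed action."""
    return state + action


def progress_value(state):
    """Hypothetical progress score in [0, 1]: 1.0 means the task is done."""
    return max(0.0, 1.0 - abs(GOAL - state) / GOAL)


def imagined_advantages(state, candidate_actions):
    """Score each action by how much progress its imagined future adds."""
    baseline = progress_value(state)
    return {a: progress_value(world_model(state, a)) - baseline
            for a in candidate_actions}


advantages = imagined_advantages(state=4.0, candidate_actions=[-2.0, 0.0, 2.0])
best_action = max(advantages, key=advantages.get)
```

No action is ever executed here. Every score comes from a predicted future, which is what lets policy improvement proceed without physical trial and error.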

The Dreamer’s Road has left the game engine.

The Architect’s Road: the dissent

Every road described so far works in pixel space. Whether through video diffusion or latent dynamics decoded to frames, the output is visual and the predictions are grounded in what the world looks like. The Architect’s Road refuses this premise.

V-JEPA 2, from Meta FAIR, predicts the future in abstract embedding space. It is not trained to reconstruct a single pixel. The claim: pixel prediction wastes capacity on irrelevant detail. The exact texture of a tablecloth, the flicker of a shadow, the grain of a wooden surface. None of it matters for understanding that a cup will fall if you push it off the edge.

The approach works. V-JEPA 2 trains on over one million hours of internet video, then adds just 62 hours of unlabeled robot data. It deploys zero-shot on Franka arms in labs it has never seen, picking and placing objects with no task-specific training. Planning runs about 16 seconds per step. Cosmos, a generative world model, takes four minutes.

But the Architect’s Road has its own problems. Training JEPA models end-to-end from pixels is notoriously unstable. The model collapses: it maps all inputs to the same embedding and declares prediction loss solved. LeWorldModel, the first JEPA to train stably end-to-end from raw pixels, solved this with a two-term loss and a single tunable hyperparameter. Fifteen million parameters, trainable on a single GPU in a few hours.
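A small numeric sketch shows why collapse is tempting and how a second loss term can remove the incentive. The anti-collapse term below is a variance hinge chosen purely for illustration; the source does not say what LeWorldModel's two terms are, so treat `variance_penalty`, `two_term_loss`, and the `weight` knob as assumptions.

```python
# Toy sketch of representation collapse and a two-term loss that penalizes it.
# The variance term is an illustrative choice, not LeWorldModel's actual loss.

def mean(values):
    return sum(values) / len(values)


def prediction_loss(predicted, target):
    """Mean squared error between predicted and target embeddings (1-D here)."""
    return mean([(p - t) ** 2 for p, t in zip(predicted, target)])


def variance_penalty(embeddings, min_std=1.0):
    """Hinge penalty that is positive when the batch standard deviation is small."""
    mu = mean(embeddings)
    std = mean([(e - mu) ** 2 for e in embeddings]) ** 0.5
    return max(0.0, min_std - std)


def two_term_loss(predicted, target, weight=1.0):
    """Prediction loss plus a weighted anti-collapse term; weight is the one knob."""
    return prediction_loss(predicted, target) + weight * variance_penalty(target)


collapsed = [0.0, 0.0, 0.0, 0.0]   # encoder maps every input to the same point
spread = [-1.5, -0.5, 0.5, 1.5]    # encoder keeps inputs apart

collapsed_pred_only = prediction_loss(collapsed, collapsed)
collapsed_total = two_term_loss(collapsed, collapsed)
spread_total = two_term_loss(spread, spread)
```

With prediction loss alone, the collapsed encoder scores a perfect zero. Once the second term is added, the collapsed solution is penalized while the spread-out one is not.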

And V-JEPA 2’s planning capabilities remain limited to short-horizon manipulation. Pick and place, not fold a shirt. ThinkJEPA addresses this by fusing a JEPA dynamics branch with a vision-language model “thinker” that provides semantic guidance over longer time horizons. The JEPA branch handles the physics. The VLM branch handles the meaning.

Three papers in quick succession: what JEPA can do, how to make it trainable, and where it needs to go next. The Architect’s Road is building fast. But it is building alone. While the Cinematographer’s Road and the Physicist’s Road converge toward video, the Architect’s Road insists that video is the wrong medium. The bet is that predicting in latent space will eventually outperform predicting in pixel space, and that the current dominance of video diffusion reflects scale advantages, not architectural superiority.

This is the defining disagreement in the field. It has a name: the LeCun Bet. AMI Labs, the startup LeCun launched after leaving Meta, raised $1.03 billion to pursue it. The empirical gap between the two camps remains the most important open question in world model research.

The Physicist’s Road: under invasion

Traditional simulation builds physics from equations. Every contact, every friction coefficient, every deformation must be hand-authored. It scales with engineers, not with compute.

Google DeepMind’s Veo Robotics suggests this road is being absorbed by the Cinematographer’s Road. The system fine-tunes Veo2 on action-conditioned robot data and uses it for the full spectrum of policy evaluation: nominal performance, out-of-distribution generalization, and safety red-teaming. A language model edits a scene description, the video model rolls out the policy in the edited scene, and the system identifies unsafe behaviors that are then confirmed on real hardware. Bleach near electronics, scissors on a laptop, a human hand in the gripper’s path. All discovered in simulation, all replicated in reality.

For policy evaluation, Veo Robotics needs no hand-authored physics and no manually curated assets. The physicist’s equations are becoming the cinematographer’s pixels.

This does not mean physics-based simulation is obsolete. High-precision contact dynamics, deformable objects, and fluid interactions still exceed what video models can reliably predict. But the boundary is moving. Every improvement in video model fidelity shrinks the domain where physics engines are irreplaceable.

What convergence means

The convergence pattern has a clear structure. Video diffusion is the gravitational center. The Cinematographer’s Road contributes the medium. The Dreamer’s Road contributes the training paradigm. The Physicist’s Road contributes the evaluation framework. And the Roboticist’s Road? In recent months, its most visible papers live at intersections. DreamZero lives at the intersection of video generation and robot control. RISE lives at the intersection of imagination training and physical manipulation. The Roboticist’s Road has become the destination that every other road is converging toward.

One road stands apart. The Architect’s Road bets that the entire convergence is a local optimum. That predicting pixels will hit a ceiling. That the right answer is not better video generation but no video generation. The evidence so far is mixed. The generative camp has DreamZero folding shirts. The JEPA camp has a 15x planning speed advantage: about 16 seconds per step for V-JEPA 2 against four minutes for Cosmos.

The strategic implication for the field: the question is no longer which road leads to a universal world model. It is whether the universal world model generates pixels or abstracts them away.

Note: Individual Road Notes for each paper cited above are published in the World Model Research Club on X (@robonaissance).
