Roads to a Universal World Model, Part 6: The Convergence

Where the roads meet, and what we find there

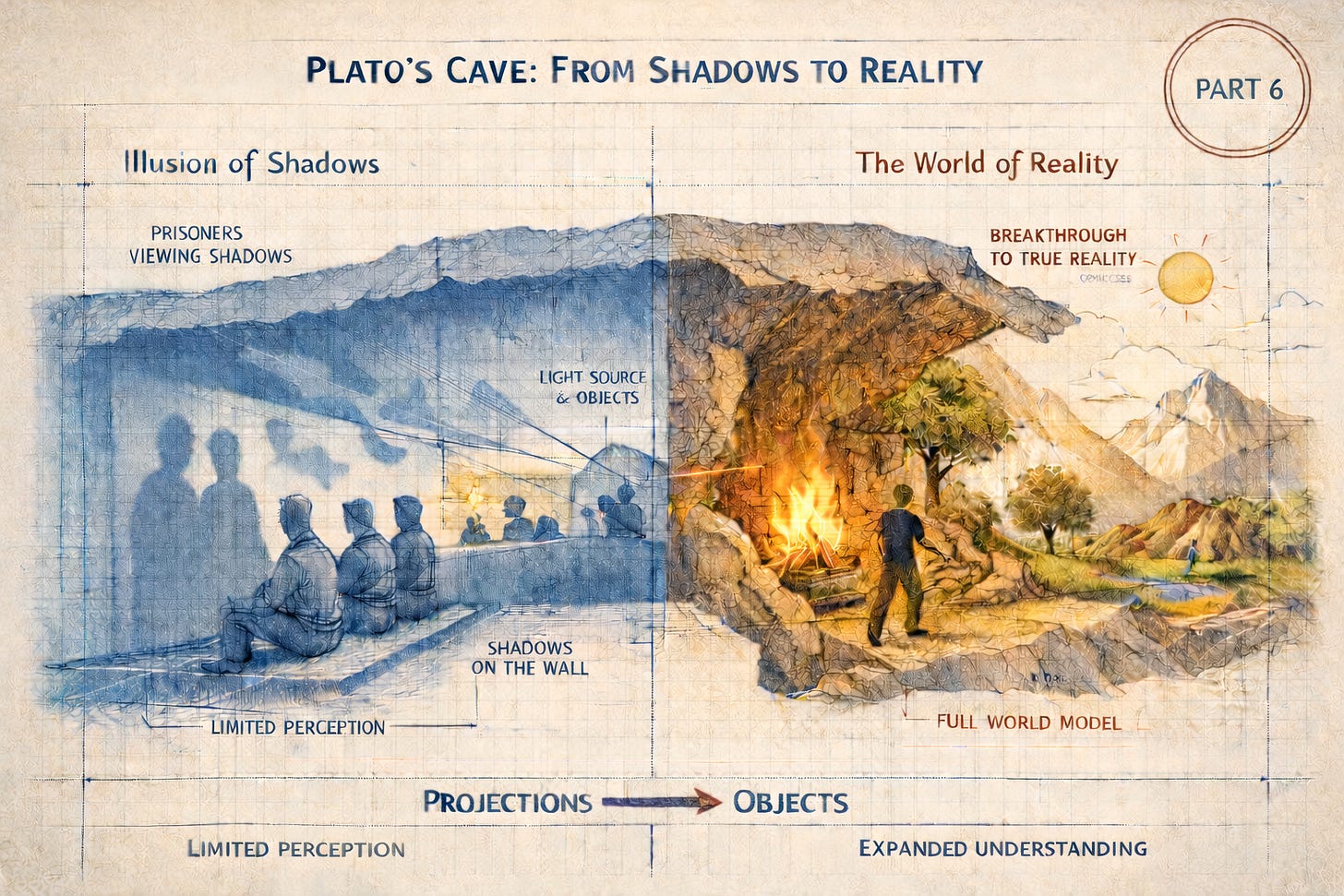

"If the organism carries a 'small-scale model' of external reality and of its own possible actions within its head, it is able to try out various alternatives, conclude which is the best of them, react to future situations before they arise." — Kenneth Craik, The Nature of Explanation, 1943

By early 2026, every major AI organization was building world mo…