The Age of Post-Training, Part 1: Learning by Imitation

Supervised fine-tuning. The simplest paradigm. The model is shown what to say. Whether it understands is a different question.

InstructGPT, March 2022. OpenAI publishes a paper claiming a 1.3 billion parameter model outperforms GPT-3, the 175 billion parameter base model, in human preference evaluations. The numbers are striking. The 1.3B model is more than a hundred times smaller. Yet humans prefer its outputs.

The paper achieved this through three stages of post-training. Forty labelers, about 13,000 written demonstrations, rankings of competing model outputs. The pretrained GPT-3 models were reshaped not by adding more pretraining data but by adding human judgment to the training signal. The first stage, supervised fine-tuning on human demonstrations, is the subject of this article. The other two stages are covered in Part 2.

What does it mean to teach a model by demonstration? The simple answer: show it what to say. The harder answer is the one this article works toward.

Imitation specifies exactly what to say, but the model still has to decide why.

To see what changed, ask both models the same question. GPT-3, given “Explain quantum entanglement to a high school student,” might continue with another question. The base model is completing text the way text usually continues on the internet, which often means a list of similar questions or a tangential paragraph. InstructGPT given the same prompt produces an explanation aimed at a high school student. The architectural difference between them is zero. The pretraining data is the same. What changed is post-training.

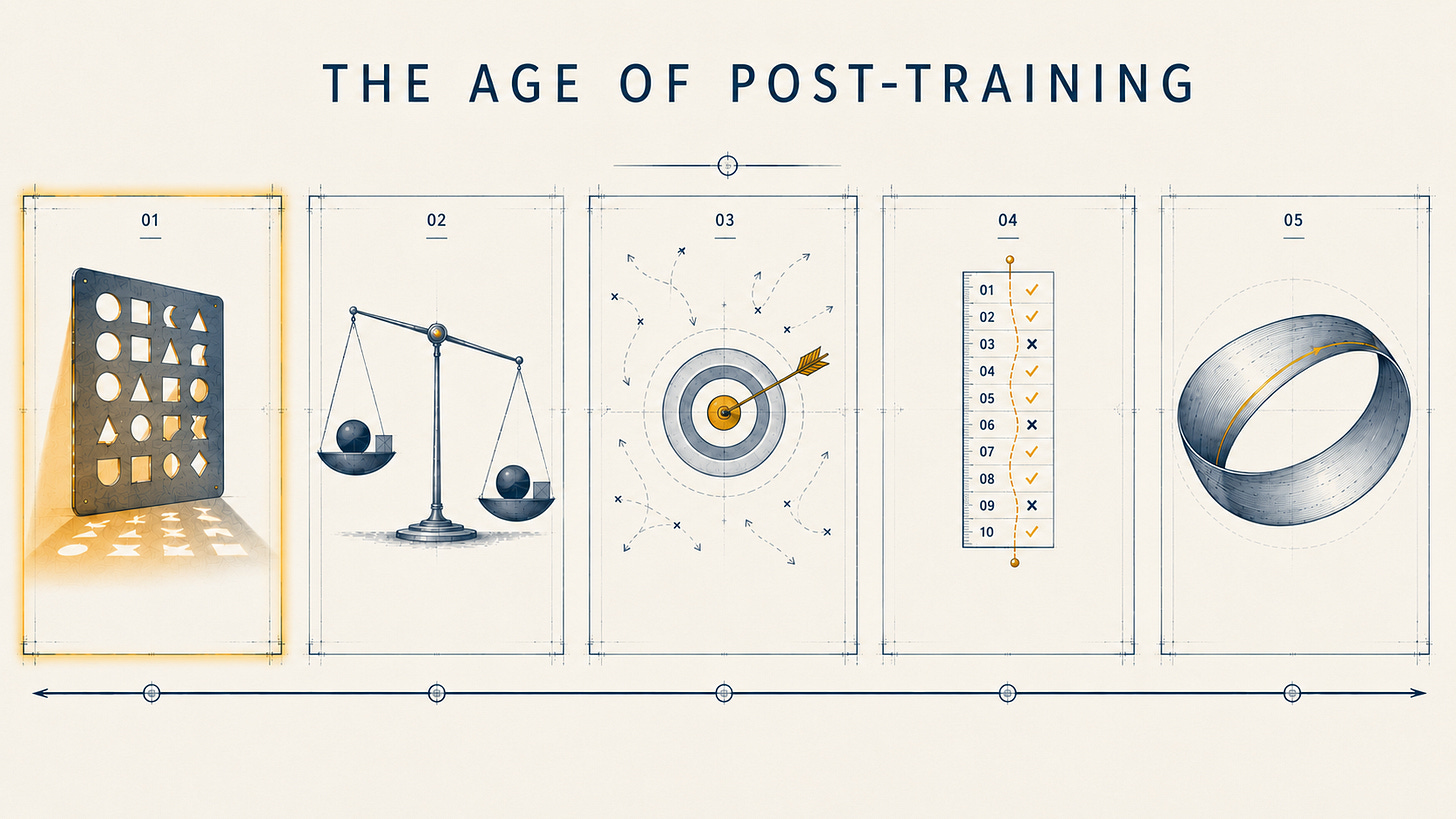

This series explores the five paradigms that define the age of post-training. Each paradigm specifies less than the previous one. By Part 5, the supervisor itself is being trained. Part 1 is the most explicit paradigm in the sequence. It is also the foundation: every other paradigm builds on what imitation establishes first.

What Imitation Does

Pretraining produces a model that can complete text. It has absorbed vast amounts of human writing and learned the statistical structure of language. It does not reliably treat an instruction as something to carry out. Asked “What is the capital of France?” a base GPT-3 might continue with “What is the capital of Spain? What is the capital of Germany?” It is completing, not answering.

Supervised fine-tuning, hereafter SFT, takes the pretrained model and continues training, but with curated data: prompt-response pairs that demonstrate the desired behavior. Where pretraining used arbitrary internet text, SFT uses carefully constructed examples of someone answering questions, following instructions, refusing harmful requests, or producing structured outputs.

The mechanism, step by step:

Take a pretrained model.

Construct a dataset of prompt-response pairs.

Train the model to predict the response tokens given the prompt, using standard cross-entropy loss.

The model’s weights shift toward producing responses that resemble the demonstrations.

This is the same training objective as pretraining. Same loss function. Same gradient descent. The difference is the data. The data carries the specification.
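The objective can be made concrete with a toy example. The sketch below computes token-level cross-entropy over a single prompt-response sequence. It assumes one common convention that this article does not specify: the loss is averaged over response tokens only, with prompt positions masked out. The function names, the four-token vocabulary, and the logits are all illustrative.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sft_loss(logits_per_position, target_ids, loss_mask):
    """Mean cross-entropy over the positions where loss_mask is 1.

    logits_per_position[i] holds the model's scores for the token at
    position i; target_ids[i] is the token that actually appears there.
    """
    total, count = 0.0, 0
    for logits, target, keep in zip(logits_per_position, target_ids, loss_mask):
        if not keep:
            continue  # prompt token: contributes nothing to the loss
        total += -math.log(softmax(logits)[target])
        count += 1
    if count == 0:
        raise ValueError("loss_mask selects no positions")
    return total / count

# Toy sequence: 2 prompt tokens followed by 2 response tokens, vocabulary of 4.
logits = [
    [0.0, 0.0, 0.0, 0.0],  # prompt position (masked)
    [0.0, 0.0, 0.0, 0.0],  # prompt position (masked)
    [2.0, 0.0, 0.0, 0.0],  # response position, model favors token 0
    [0.0, 0.0, 0.0, 0.0],  # response position, model is undecided
]
targets = [1, 2, 0, 3]
mask = [0, 0, 1, 1]

loss = sft_loss(logits, targets, mask)
print(f"SFT loss on response tokens: {loss:.4f}")
```

Setting the mask to all ones recovers the pretraining-style objective over the whole sequence. Nothing else in the computation changes, which is the point: the loss is the same and the data is what differs.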

Pretraining gave the model knowledge of the world through text. SFT shapes the model to deploy that knowledge in response to instructions. The capability for accurate, helpful, structured responses was already latent in the pretrained weights. SFT adds few, if any, new facts. It mostly surfaces facts the model already had, and shapes the model toward formats and registers humans expect.

The imitation paradigm has many subforms. Instruction tuning is SFT specifically on instruction-following data; FLAN, Alpaca, and ShareGPT are well-known examples. Distillation is SFT on outputs from a stronger model, where a smaller model learns to imitate a larger one. Chain-of-thought tuning is SFT on data that includes reasoning traces, not just answers. System-message training is SFT that conditions on system prompts. Multi-turn dialogue training is SFT on conversation transcripts. All are forms of imitation. All work via the same mechanism: show the model what to produce, and shape the weights to produce it.
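One way to see that these subforms share a mechanism is to notice that each reduces to the same thing: pairs of (context, target text). The sketch below is illustrative only. The record shapes, field names, and the `to_pairs` helper are hypothetical and do not correspond to the format of any particular dataset.

```python
def to_pairs(record):
    """Flatten one training record into (context, target) pairs."""
    kind = record["kind"]
    if kind == "instruction":
        return [(record["prompt"], record["response"])]
    if kind == "distillation":
        # Same shape as instruction data; the target was written by a stronger model.
        return [(record["prompt"], record["teacher_output"])]
    if kind == "chain_of_thought":
        # The target includes the reasoning trace, not just the answer.
        return [(record["prompt"], record["reasoning"] + "\n" + record["answer"])]
    if kind == "system_message":
        # The system prompt becomes part of the context the model conditions on.
        return [(record["system"] + "\n" + record["prompt"], record["response"])]
    if kind == "multi_turn":
        # Each assistant turn is a target; everything before it is context.
        pairs, context = [], ""
        for turn in record["turns"]:
            if turn["role"] == "assistant":
                pairs.append((context, turn["text"]))
            context += f'{turn["role"]}: {turn["text"]}\n'
        return pairs
    raise ValueError(f"unknown record kind: {kind}")

examples = [
    {"kind": "instruction", "prompt": "Name a prime.", "response": "7"},
    {"kind": "distillation", "prompt": "Name a prime.", "teacher_output": "11"},
    {"kind": "chain_of_thought", "prompt": "What is 2 + 3?",
     "reasoning": "2 plus 3 is 5.", "answer": "5"},
    {"kind": "system_message", "system": "Answer briefly.",
     "prompt": "Capital of France?", "response": "Paris"},
    {"kind": "multi_turn", "turns": [
        {"role": "user", "text": "Hi"},
        {"role": "assistant", "text": "Hello."},
        {"role": "user", "text": "Capital of France?"},
        {"role": "assistant", "text": "Paris."},
    ]},
]

all_pairs = [pair for record in examples for pair in to_pairs(record)]
for context, target in all_pairs:
    print(repr(context), "->", repr(target))
```

Once the data is in this shape, the training step does not need to know which subform it came from.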

SFT teaches by example, not by rule. There is no “be helpful” parameter, no “follow instructions” instruction. The model receives thousands of demonstrations of helpfulness, and the pattern emerges in the weights. You teach the behavior by showing it, not by stating it.

The Anchor Models

Two papers established imitation as a working paradigm. Neither was the first to attempt SFT. Both were among the first to demonstrate, at scale, what SFT could do.

InstructGPT, March 2022

The paper is “Training language models to follow instructions with human feedback” (Ouyang et al., arXiv 2203.02155, March 4, 2022). The OpenAI Alignment team led the work. Long Ouyang, the lead author, came to the project from a cognitive psychology PhD at Stanford. His framing of the problem was direct: GPT-3, out of the box, was not designed to do useful cognitive work. It was designed to predict what someone on the internet might say in a given setting. The team’s bet was that the gap between “what the internet says” and “what the user wants” could be closed not by more pretraining, but by demonstration. Forty labelers writing sample responses would teach the model what useful cognitive work actually looked like.

The paper’s method had three stages:

Stage 1: SFT. About 13,000 prompts with labeler-written demonstrations. Train GPT-3 on the resulting (prompt, response) pairs.

Stage 2 (covered in Part 2): A reward model trained on labeler rankings of model outputs.

Stage 3 (covered in Part 2): PPO against the reward model.

The headline finding from the full pipeline, the one that startled the field: a 1.3B parameter InstructGPT model with all three stages was preferred by labelers to the 175B GPT-3 base model. A model more than a hundred times smaller, judged better by humans.

But the SFT stage alone produced a substantial gain. The paper’s preference ordering placed SFT models below the full PPO models but well above GPT-3, including GPT-3 with carefully constructed few-shot prompts. SFT was not the whole answer, but it was most of the answer. About 13,000 demonstrations turned a model that completed text into a model that responded to instructions.

Concrete details for texture: 40 labelers, screened for sensitivity to diverse preferences and quality of demonstration. Each labeler wrote prompts spanning question answering, summarization, brainstorming, rewriting, and chat. The labelers were the bottleneck. The model was constrained by what 40 humans could demonstrate.

ChatGPT, released November 2022, was built on this foundation. The full RLHF pipeline mattered for ChatGPT’s polish. But the underlying instruction-following behavior was established by imitation.

Llama 2-Chat, July 2023

The Llama 2 paper (Touvron et al., arXiv 2307.09288, July 2023) reported a finding that sharpened the imitation thesis. The team initiated SFT using publicly available instruction tuning data, the same data sources that had bootstrapped earlier instruction-tuned models. They observed that third-party SFT sources lacked the diversity and quality needed to align the model toward dialogue-style instructions. The team set aside millions of publicly available examples. They titled the section in the paper “Quality Is All You Need.” They prioritized vendor-based in-house annotation instead.

The number they reported: 27,540 SFT annotations. The team stopped collecting more. The decision rested on a small experiment. The team manually examined 180 examples, comparing model outputs against handwritten annotator outputs. The model’s outputs were often competitive with what the annotators had written. That was the signal: the demonstrations were no longer adding the marginal capability the field had assumed they would. More demonstrations of the same type were not going to make the model meaningfully better. The team stopped.

A finding from two months earlier sharpened the lesson. Chunting Zhou and Pengfei Liu, leading a team at Meta AI, ran an experiment designed to isolate the role of pretraining from the role of fine-tuning. They took LLaMA, a 65 billion parameter pretrained model, and fine-tuned it on only 1,000 carefully curated prompt-response pairs. No reward model. No RLHF. Just supervised loss, applied to a thousand examples. The paper, titled “LIMA: Less Is More for Alignment” (arXiv 2305.11206, May 2023), reported that the resulting model, LIMA, learned to follow instructions competitively with models trained on far more data. One thousand examples. Not millions. Not even tens of thousands.

The LIMA authors’ interpretation was direct: almost all of the knowledge in large language models is learned during pretraining, and only limited instruction tuning is necessary to teach the model to produce high-quality output. The bet had paid off. The pretrained model already knew. It just had not been asked to.

The finding was counterintuitive at the time. The pretraining era had taught the field that more data is better. The post-training era’s first lesson: not always. For SFT specifically, the data is the specification. Specification quality matters more than specification volume.

The implication was substantial. If 1,000 demonstrations were enough to elicit instruction-following from a strong pretrained model, then most of the capability had already been latent. SFT was uncovering, not constructing.

What Imitation Cannot Specify

Imitation specifies, exactly: given this input, produce this output. The model learns a mapping from inputs to distributions over outputs. The specification is the demonstration.

Three things imitation cannot specify, even in principle.

The first is why this output and not another similar one. The model imitates the demonstrated response. It does not learn what makes that response correct as opposed to a similar incorrect one. If the labeler had written a slightly different response, the model would have imitated that instead. The reasoning behind the demonstration is not transmitted.

The second is what to do in domains not represented in the demonstrations. Imitation generalizes within the demonstration distribution. Out of distribution, behavior is unpredictable. The model has been shown what to say in covered cases. The uncovered cases are improvisation.

The third is whether the imitated response is correct. The model imitates the labeler’s judgment. If labelers were systematically wrong, the model would systematically reproduce that wrongness. SFT cannot distinguish “what the labeler wrote” from “what is true.” The two are equated by construction.

There is a deeper point about what imitation produces. The model has been shaped by examples of instruction-following such that it now produces tokens consistent with following instructions. It has no model of its own behavior. It has no goals. It has examples it has been pulled toward by gradient descent. From the outside, this looks like instruction-following. From the inside, there is no inside.

The pretraining era’s foundation models were knowledge-rich but instruction-blind. SFT closed that gap. But SFT introduced its own gaps.

Models trained purely on imitation produce the demonstrations they were shown, including subtle errors. The aggregate of human labelers contains inconsistencies, and the model averages them. Two labelers writing for the same prompt distribution will disagree on stylistic choices, depth of explanation, formality, and where to draw the line on harmful requests. The model learns the average. The average is sometimes incoherent.
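The averaging follows directly from the loss. When demonstrations for the same prompt disagree, the distribution that minimizes cross-entropy is the one that matches the labelers' mix, not the one that commits to either answer. A minimal sketch with hypothetical data: ten labelers, a borderline prompt, and a response reduced to one of two labels for simplicity.

```python
import math
from collections import Counter

def cross_entropy(demos, model_probs):
    """Average negative log-likelihood of the demonstrations under model_probs."""
    return -sum(math.log(model_probs[d]) for d in demos) / len(demos)

# Hypothetical: six labelers demonstrated a refusal, four demonstrated compliance.
demos = ["refuse"] * 6 + ["comply"] * 4

counts = Counter(demos)
empirical = {label: n / len(demos) for label, n in counts.items()}

candidates = {
    "almost always refuse": {"refuse": 0.99, "comply": 0.01},
    "matches labeler mix": empirical,
    "coin flip": {"refuse": 0.5, "comply": 0.5},
}

losses = {name: cross_entropy(demos, probs) for name, probs in candidates.items()}
best = min(losses, key=losses.get)

for name, value in losses.items():
    print(f"{name:>22}: {value:.4f}")
print("lowest loss:", best)
```

A model at this optimum refuses some of the time and complies the rest, on the same prompt. Each labeler was consistent. The mixture is not.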

Imitation cannot capture preferences between two valid responses. If response A and response B are both valid, the labeler picks one to demonstrate. The model has no way to learn that B was equally valid. The signal collapses the range of acceptable answers into a single answer.

The cost of high-quality demonstrations scales with capability. Demonstrating excellent reasoning requires excellent reasoners as labelers. Demonstrating excellent code requires excellent coders. Demonstrating excellent diagnosis requires excellent doctors. As models approached and surpassed average human performance in narrow domains, the labeler bottleneck became visible. Imitation could not scale beyond what the best available demonstrators could produce.

The economics shifted as well. InstructGPT’s 40 labelers, screened and trained, produced about 13,000 demonstrations. That work took weeks. As frontier capabilities pushed into mathematics, programming, and scientific reasoning, the labelers needed to demonstrate excellence in those domains. The cost per demonstration rose. The pool of qualified demonstrators shrank. By 2024, the leading labs were paying domain experts hundreds of dollars per hour to write training data, and the experts were the rate-limiting step.

There was also a ceiling problem. To a first approximation, an imitation-trained model cannot exceed its demonstrators on the demonstrated distribution. If the best available human reasoners average a certain quality, an SFT-only model trained on their work asymptotes near that quality. To produce a model that reasons better than the best humans available to label, the training signal needs to come from somewhere other than human demonstration. Imitation has a ceiling because demonstrations have a ceiling, and the field had begun to reach it.

From Demonstration to Preference

Imitation is bounded by what humans can demonstrate. If the desired model behavior exceeds what labelers can produce, imitation cannot reach it. This was acceptable for chatbot behavior in 2022, when labelers could write good responses to consumer prompts. It became inadequate as the field pushed into domains where multiple valid responses existed, where ranking responses was easier than producing them, and where capability targets exceeded what individual labelers could demonstrate.

The field’s response was to change the question. Instead of asking labelers to produce a good response, ask labelers which of two responses is better. Comparison is easier than production. Labelers can rank responses they could not have written from scratch. The specification shifts from demonstration to preference.

InstructGPT’s Stages 2 and 3 already implemented this. The reward model and PPO were the answer to imitation’s bottleneck. Together they are what the field calls RLHF, reinforcement learning from human feedback.
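The change in specification shows up in the loss. A reward model assigns a scalar score to each response, and the standard pairwise objective, the form reported in the InstructGPT paper, penalizes the model when the response the labeler preferred does not outscore the one the labeler rejected. The sketch below shows only that per-pair loss. The function name and the example scores are illustrative, and Part 2 covers the full method.

```python
import math

def pairwise_preference_loss(reward_chosen, reward_rejected):
    """-log(sigmoid(reward_chosen - reward_rejected)), computed stably."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch to avoid overflow.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Illustrative scores a reward model might assign to two responses.
agrees = pairwise_preference_loss(reward_chosen=2.0, reward_rejected=0.0)
undecided = pairwise_preference_loss(reward_chosen=1.0, reward_rejected=1.0)
disagrees = pairwise_preference_loss(reward_chosen=0.0, reward_rejected=2.0)

print(f"ranks the pair as the labeler did: {agrees:.4f}")
print(f"scores both responses equally:     {undecided:.4f}")
print(f"ranks the pair the other way:      {disagrees:.4f}")
```

Note what the labeler supplied here: an ordering, not a response. Nothing in the loss says what a good answer looks like, only which of two answers was better.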

Preference promised more feedback per labeler hour, since rankings are faster than writing. It promised the ability to learn from labelers who could judge but not produce. It promised a comparative signal that revealed what humans actually wanted, rather than what labelers happened to write.

Preference cost something else. Reward models become a new artifact that itself requires evaluation. Reward hacking emerged: models learned to satisfy the reward model rather than the underlying intent the reward model was meant to capture. Opacity increased. Why the model produced a given output became harder to interpret when the training signal was no longer a written demonstration but a learned scalar reward.

The shift from imitation to preference was not just a technical change. It was a change in what the field was willing to specify directly versus what it left implicit. Each paradigm in this series will repeat this pattern: specify less, hope for more.

Demonstrate, and the model imitates. The next paradigm asks the model to choose.

The Age of Post-Training is a five-part series examining how language models are taught after pretraining. Next, Part 2: “Learning by Preference.”