The Making of OpenClaw

Thirteen years in Vienna shaped the instincts. Three years of burnout proved the cost. A weekend hack passed Linux’s GitHub star count in three months. The architecture won. The questions about who runs what just arrived.

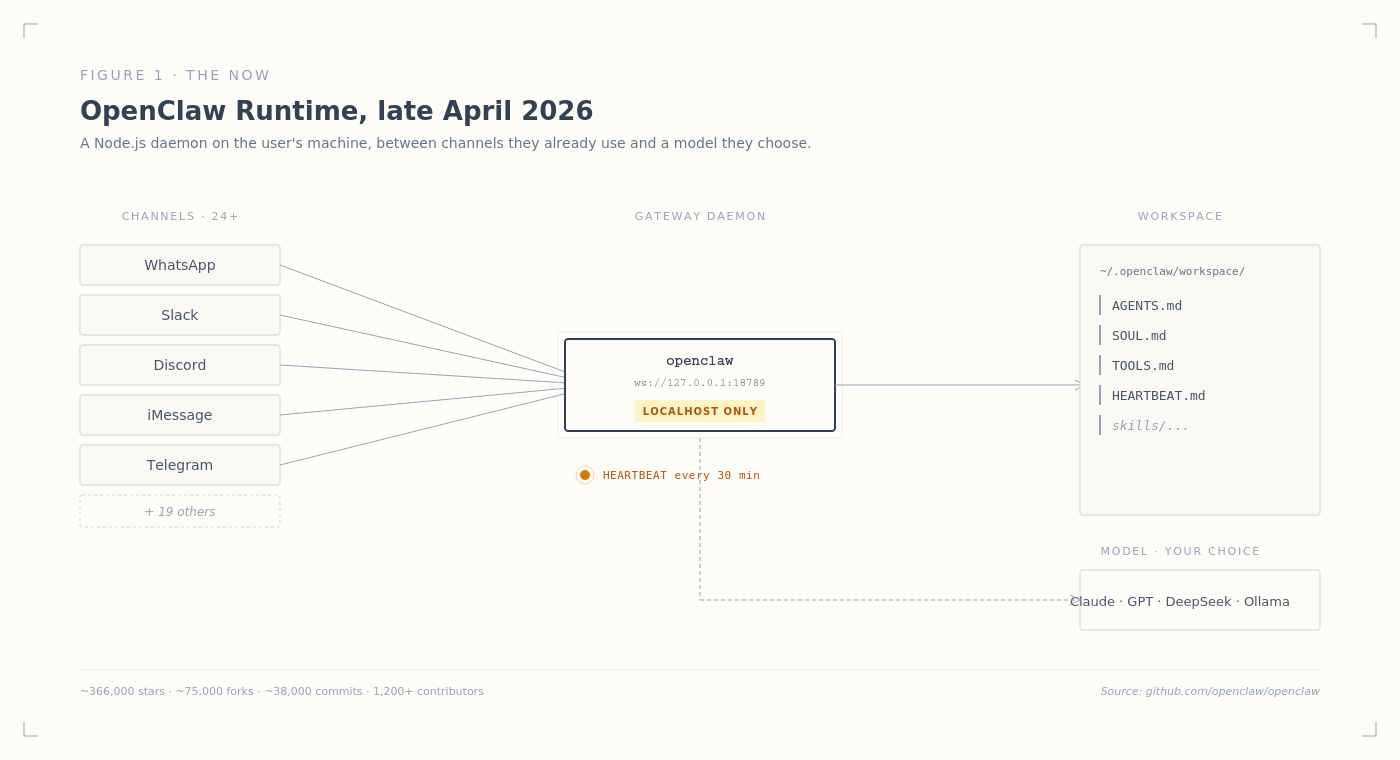

Open the README of one of the fastest-growing personal-AI repositories on GitHub. The first install command is npm install -g openclaw@latest. The recommended runtime is Node 24. The default Gateway port is 18789, bound to localhost. There is no signup, no API key for the platform itself, no vendor account. The whole product is a process that runs on the user’s machine. The agent’s identity lives in three plain-text files called AGENTS.md, SOUL.md, and TOOLS.md, sitting in a folder at ~/.openclaw/workspace. If something breaks, you can diff your way back to a working version. If you want to migrate the agent to another machine, you copy a folder. The whole agent is something you could attach to an email.

As of late April 2026, OpenClaw has roughly 366,000 stars on GitHub, more than 75,000 forks, around 38,000 commits to its main branch, and a contributor count above 1,200. In early March 2026 it surpassed Linux on the GitHub stars leaderboard. In one analysis, React took thirteen years to accumulate the kind of star count OpenClaw reached in roughly one hundred days. The repository is governed by an independent foundation. Sam Altman’s company OpenAI sponsors it. Sam Altman does not control it. The author has stepped back from day-to-day governance.

The author is Peter Steinberger, an Austrian developer who spent thirteen years before OpenClaw building a PDF framework called PSPDFKit, exited through a nine-figure deal with Insight Partners, and then spent three years unable to write a line of code. He has described the period publicly. He booked a one-way ticket to Madrid. He tried therapy and ayahuasca. In a recent Lex Fridman interview, he compared himself to Austin Powers having his mojo extracted and confessed that he could not get code out anymore and was just staring and feeling empty. He came back to building in late 2024 because Claude Code had brought a paradigm shift while he was away, and writing code started to feel less like grinding and more like playing a computer game. He shipped forty-something AI-related side projects through 2025. The forty-third of them was a weekend hack he called WhatsApp Relay. It hit nine thousand GitHub stars in twenty-four hours. Within three months it would be renamed three times, sit at the top of the GitHub stars leaderboard, and be moved into a non-profit foundation while its author left to lead personal agents at OpenAI.

What is non-obvious is the shape of the platform that produced these numbers. Most AI agent products in 2025 and 2026 chose vertical integration. Anthropic ships Claude with its own agentic products. Google ships Gemini across its product portfolio. Meta ships agents inside its messaging products. OpenAI itself, where Steinberger now works, is building closed personal-agent products on its own infrastructure. The dominant pattern is owned: own the model, own the memory, own the tools, own the UI, own the customer. OpenClaw refused all five. The model is whatever you point it at. The memory is a folder of markdown files on your hard drive. The tools live in another folder. The UI is whichever messaging app you already use, from WhatsApp to Discord to iMessage to WeChat. The customer is whoever installs the npm package. Each refusal looks, from the outside, like a strategic concession. Each was made by a developer building for himself, with the conscious intention to give away the parts a startup would normally keep.

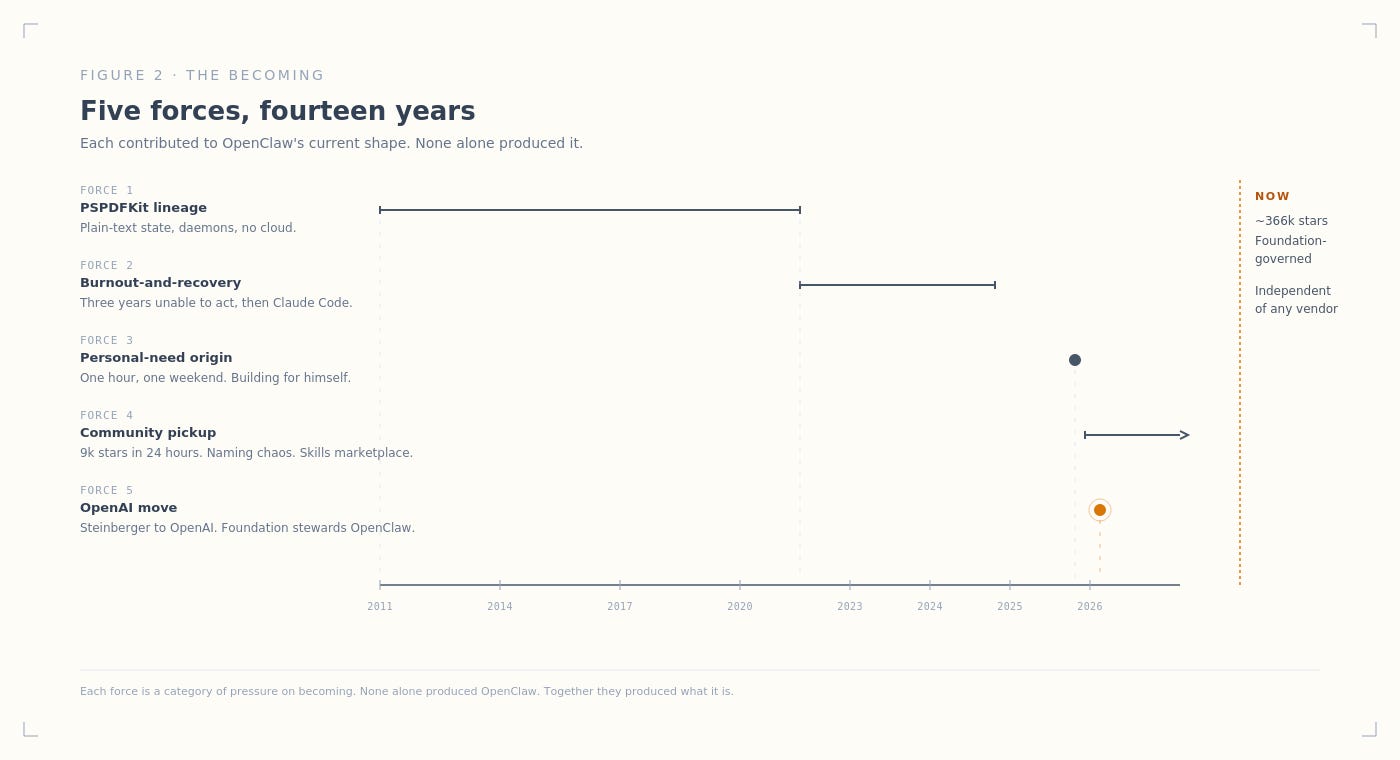

The convictions did not arrive when Steinberger sat down at a terminal in November 2025. They were already in place before OpenClaw existed. The choices that produced its current shape were made over thirteen years of building software, three years of being unable to build anything, twelve months of rebuilding the habit through small AI projects, one hour of typing a prototype that worked, and three months of public frenzy that compounded the project into something its author could no longer fully control.

How It Became

OpenClaw exists in the shape it does because of five compounding forces over fourteen years. The first began before PSPDFKit was even a company.

Steinberger started PSPDFKit in 2011, while waiting six months for a U.S. work visa to take a job offer made at a WWDC after-party. The visa took longer than expected. He filled the time with a side project for a friend who needed reliable PDF rendering on iPads. PDF turned out to be the kind of format that punished casual implementations: thousands of pages of specification, edge cases everywhere, performance requirements that broke most attempts. Steinberger built a solution that worked. When his manager noticed his health declining from running both the day job and the side project, he was given a week to choose. He chose his own company, which meant leaving the United States within a week because his visa was tied to his employer. He returned to Vienna and co-founded PSPDFKit with Martin Schürrer.

For the next thirteen years, PSPDFKit grew without external funding into a Vienna-based software company whose code ran inside applications used by Apple, Dropbox, SAP, Volkswagen, DocuSign, and Autodesk. Across all of those applications, the framework was running on customers’ devices, inside the customers’ apps, under the customers’ control. Steinberger was building the kind of infrastructure that gets installed once and run inside other people’s products for years. The instinct that produced PSPDFKit was the same instinct that would later produce OpenClaw: build infrastructure customers control on their own machines, not services they rent from someone else’s cloud. In October 2021, Insight Partners closed an investment of approximately one hundred and sixteen million dollars in PSPDFKit. Steinberger and Schürrer stepped down from full-time roles. By his account, after thirteen years of working most weekends, he had given the company the kind of attention that left him completely broken.

What followed was three years that nearly produced a different ending. The exit, by every external metric, was a triumph; internally, it left him hollow. The Austin Powers analogy from the Lex Fridman interview has since become a touchstone in discussions of founder burnout. He could not write code. He sat at the computer staring. He booked a one-way ticket to Madrid and tried to make up for thirteen years of life he had not lived. He moved cities. He attended parties. He did therapy. He tried ayahuasca. He has written on his blog that he eventually understood a lesson many post-exit founders describe: you cannot find purpose by relocating; you have to create it. None of the rest of OpenClaw exists if Steinberger does not come back from this period. The recovery was not predetermined. Founders who experience this kind of post-exit emptiness sometimes never return to building. The Madrid retreat was open-ended. He could have stayed retired.

He came back, late in 2024, because of Claude Code. The recognition was specific. He sat down at a terminal for the first time in a long time, prompted Claude Code to do something, and watched the system do it. The bottleneck, he realized, had moved. The repetitive plumbing that had drained him for thirteen years could now be delegated. The work he had loved in the early PSPDFKit years, structuring problems and orchestrating systems, was now what mattered. Building software felt like playing a computer game again. In November 2024, he tweeted that he was back. What followed was twelve months of sustained shipping. Forty-something AI-related projects went onto GitHub, among them Peekaboo, a screenshot-automation tool; VibeTunnel, a browser-to-terminal bridge; Brabble, a wake-word voice daemon; gogcli, a Google services CLI; and Poltergeist, a build watcher. Most of them were small. None of them broke through. They were the cost of finding the one that would.

The forty-third was OpenClaw. By November 2025, Steinberger had been thinking on and off for more than eighteen months about a personal AI assistant. He had first conceived the idea in April 2024 and shelved it because he assumed major companies would inevitably ship something equivalent. By late 2025 he realized they had not. Apple Intelligence had shipped without anything an Apple user would recognize as a personal agent. Google Gemini was integrated across Google’s products but not into the messaging apps people actually used. ChatGPT was a chat interface, not an assistant that did things across an existing digital life. The gap remained open. He sat down at a computer one weekend, prompted Claude Code to glue WhatsApp to an LLM, and had a working prototype in roughly an hour. He uploaded it to GitHub under the name WhatsApp Relay. Within twenty-four hours, the repository had nine thousand stars. The choices baked into that hour were the choices a developer building for himself would make: the localhost binding, the markdown-based agent definition, the channel-agnostic architecture, and the heartbeat loop that lets the agent alert users on its own clock. Steinberger did not survey a market. He scratched his own itch with the tools he wanted to use, on the channels he was already using, with his data on his own machine. The platform outgrew its author’s needs because those needs turned out to be far more widely shared than he had realized.

The community took it from there. Within weeks the renames began. By then Steinberger was calling the project Clawdbot, a play on Claude and on the lobster-monster mascot users see when reloading Claude Code. Anthropic’s legal team raised trademark concerns. A late-night Discord brainstorm produced Moltbot, a reference to lobsters molting their shells to grow. In the scramble to rename his GitHub account, his freshly released old handle was sniped within about ten seconds, and cryptocurrency scammers used it to promote fraudulent tokens. Three days later he renamed the project a third time, to OpenClaw, after checking with Sam Altman that the name would not conflict with OpenAI branding. The same day Moltbot rebranded as OpenClaw, an entrepreneur named Matt Schlicht launched Moltbook, a social network where AI agents could create profiles, comment on each other, and argue. The network was not Steinberger’s; it used OpenClaw as an enabling platform. The combination of agent platform, agent social network, and visible chaos produced more attention than any of the three would have individually.

In February 2026, a team led by Irvin Steve Cardenas at Kent State University’s Advanced Telerobotics Research Lab won the SF OpenClaw Hackathon by building a bridge between OpenClaw and ROS 2, the Robot Operating System used as middleware for industrial and academic robotics. The project was called ROSClaw. Cardenas’s tweet announcing the win included the line “Agents escaped the screen!” and reached more than two hundred thousand views in the days after. The arXiv paper that followed documented the team deploying ROSClaw on three robot platforms (a wheeled robot, a quadruped, and a humanoid) with four different foundation-model backends. The work required integration layers OpenClaw had not been designed for: dynamic capability discovery, pre-execution action validation, and structured audit logging. Robotics had not been on Steinberger’s roadmap. Neither had social networks for AI agents, nor Tencent and Z.ai shipping OpenClaw-based services into the Chinese consumer market. The current shape of OpenClaw is partly the result of decisions Steinberger never made. He provided a platform with the right shape; the world built what it wanted on top of it.

The last force was the institutional one. On February 14, 2026, three months after WhatsApp Relay shipped, Steinberger published a blog post announcing he was joining OpenAI to lead personal agents. OpenClaw, he wrote, would move into a non-profit foundation that OpenAI would sponsor financially, while the project itself stayed model-agnostic and community-governed. Sam Altman confirmed the move on X the next day. Steinberger had spent the preceding week in San Francisco talking with the major AI labs; Meta and Anthropic were both reported to be courting him. He chose OpenAI because he had already spent thirteen years building a company and was not interested in doing it again, and because he believed OpenAI’s infrastructure was the fastest path to building the kind of agent his mother could use. The choice was not predetermined. He could have accepted venture capital and built OpenClaw as a startup; he was financially free to choose. The current project’s foundation-governance shape, sponsored but independent, exists because he chose it over the closed-commercial alternative.

Together, the five forces produced a project shaped as much by the world around Steinberger as by his own choices. The PSPDFKit lineage gave him architectural instincts he could not unlearn. The burnout kept him from acting on those instincts for three years; the recovery, through Claude Code, gave him a way to act on them quickly when he came back. The personal-need origin gave him a target shaped by his own use rather than by a market. The community took the project in directions he never planned. The OpenAI move gave it institutional backing that could either preserve or compromise its independence. None of these forces alone produced OpenClaw. None was inevitable. Together they produced what OpenClaw is now.

The Commitments

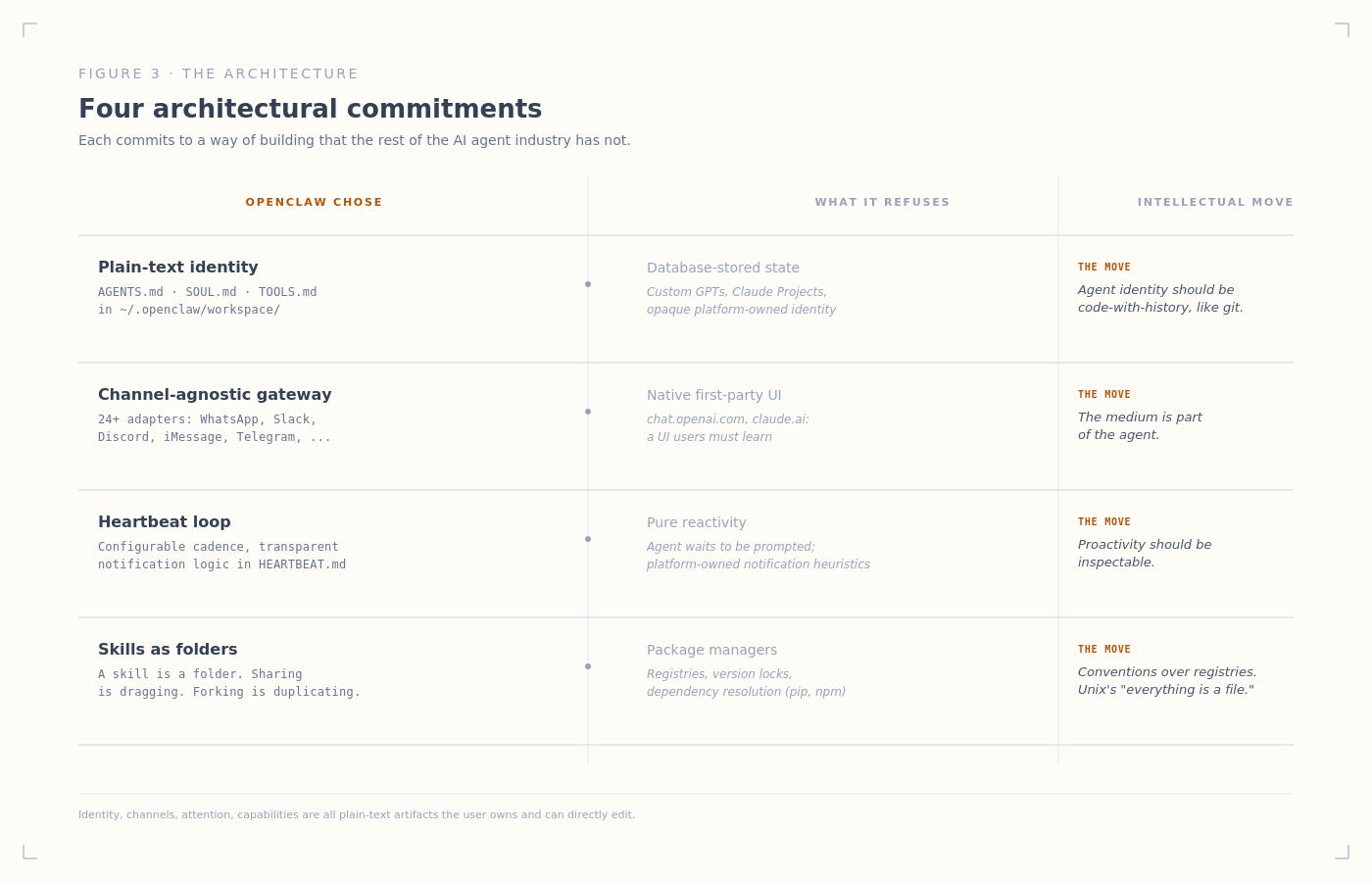

The forces produced a system whose architecture rests on four commitments. Each is a consequential design choice, and each commits to a way of building that the rest of the field has not adopted.

The agent’s identity is plain text

What it is. Three files sit in ~/.openclaw/workspace: AGENTS.md, SOUL.md, and TOOLS.md. Each has YAML frontmatter for metadata and Markdown for content. At startup, the daemon reads these files. The agent’s identity, personality, and toolkit are loaded from the file system.
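To make the loading step concrete, here is a minimal Python sketch of reading the three identity files and splitting each into frontmatter and body. It is not OpenClaw’s loader, which lives in a Node project; the function names `split_frontmatter` and `load_identity`, and the example fields `name` and `version`, are hypothetical. The parser handles only flat `key: value` lines, where a real implementation would use a full YAML parser.

```python
from pathlib import Path

# The three identity files named in the text; the loader itself is a sketch.
IDENTITY_FILES = ("AGENTS.md", "SOUL.md", "TOOLS.md")


def split_frontmatter(text: str) -> tuple[dict[str, str], str]:
    """Split a '---'-delimited header from the Markdown body.

    Handles only flat `key: value` lines; a real loader would use a YAML parser.
    Returns ({}, text) when there is no well-formed header.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    meta: dict[str, str] = {}
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            # Everything after the closing delimiter is the Markdown body.
            return meta, "\n".join(lines[i + 1:])
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    # Opening delimiter without a closing one: treat the whole file as body.
    return {}, text


def load_identity(workspace: Path) -> dict[str, dict]:
    """Read whichever identity files exist in the workspace folder."""
    identity: dict[str, dict] = {}
    for name in IDENTITY_FILES:
        path = workspace / name
        if path.is_file():
            meta, body = split_frontmatter(path.read_text(encoding="utf-8"))
            identity[name] = {"meta": meta, "body": body}
    return identity
```

Calling `load_identity(Path.home() / ".openclaw" / "workspace")` would return whatever identity files exist there. Nothing beyond the standard library is needed, which is the same property that lets the folder be copied, emailed, or committed.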

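What it refuses. Most personal-agent platforms store agent identity in databases the platform owns, or in proprietary serialization formats users do not see. Anthropic’s Claude Projects and OpenAI’s Custom GPTs keep that state on the vendor’s servers, editable only through the vendor’s interface, and many agent frameworks hold it in internal state users cannot directly inspect. None of them gives the user a file to diff, version, or move.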

Why this works. Plain text is diffable. Diffable means version-controllable in git. The agent’s identity then has the same operational properties as code: forkable, mergeable, attributable, recoverable. SOUL.md as plain text means a non-developer can read the file and see the agent’s values. The agent’s behavior is not opaque; the parameters that determine it are visible. When something goes wrong, the user reads the file and sees why. When something needs to change, the user edits the file and reloads.
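The diffability claim can be shown with the standard-library `difflib` module: comparing two versions of a SOUL.md yields the same kind of line-level record git shows. The file contents are invented for illustration, and `soul_diff` is a hypothetical helper, not part of OpenClaw.

```python
import difflib

# Two invented versions of a SOUL.md, as they might appear in two commits.
SOUL_V1 = "# Values\nBe terse.\nNever share secrets.\n"
SOUL_V2 = "# Values\nBe terse, but warm.\nNever share secrets.\n"


def soul_diff(old: str, new: str, name: str = "SOUL.md") -> list[str]:
    """Return a unified diff of two versions of an identity file."""
    return list(
        difflib.unified_diff(
            old.splitlines(),
            new.splitlines(),
            fromfile=f"{name} (before)",
            tofile=f"{name} (after)",
            lineterm="",  # lines come from splitlines(), so no newline suffix
        )
    )


for line in soul_diff(SOUL_V1, SOUL_V2):
    print(line)
```

The output marks the removed value with a leading `-` and its replacement with a leading `+`, which is exactly what a user would read when asking why the agent’s tone changed.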

The intellectual move. Treating agent identity as code-with-history is the move version control made for source code, and the one git, released in 2005, made cheap to fork and merge. Git did not win on better merge algorithms alone. It won because every clone carried the full plain-text history, which made software collaboration possible at scale. OpenClaw is making the equivalent bet for agent identity: the system that wins will be the one whose state is plain-text, diffable, and version-controllable, not the one with the slickest serialization.

The channel is whatever the user already uses

What it is. The Gateway accepts messages from twenty-four channel adapters: WhatsApp, Slack, Discord, iMessage, Telegram, Signal, Microsoft Teams, Matrix, WeChat, IRC, and others. Each adapter normalizes its native message format into a common envelope and passes it to the agent. The agent never sees which channel a message came from.
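A minimal sketch of the adapter pattern, assuming a channel-neutral envelope with only sender, conversation, and text fields. The IRC adapter parses the standard PRIVMSG line format; the webhook payload shape is invented and does not match any real messaging API. `Envelope`, `gateway`, and the stand-in `agent` function are hypothetical names, not OpenClaw’s types.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Envelope:
    """Channel-neutral message. Deliberately has no `channel` field."""
    sender: str
    conversation: str
    text: str


def from_irc(line: str) -> Envelope:
    # IRC PRIVMSG with a prefix, e.g. ":alice!a@host PRIVMSG #family :hi there"
    if not line.startswith(":"):
        raise ValueError("sketch only handles prefixed IRC lines")
    prefix, rest = line[1:].split(" ", 1)
    command, target, trailing = rest.split(" ", 2)
    if command != "PRIVMSG":
        raise ValueError(f"unsupported IRC command: {command}")
    text = trailing[1:] if trailing.startswith(":") else trailing
    return Envelope(sender=prefix.split("!", 1)[0], conversation=target, text=text)


def from_webhook(payload: dict) -> Envelope:
    # Hypothetical JSON webhook shape; real messaging APIs differ.
    return Envelope(sender=payload["from"], conversation=payload["chat_id"],
                    text=payload["body"])


# Supporting a new channel means adding one entry here; the agent is untouched.
ADAPTERS: dict[str, Callable[..., Envelope]] = {"irc": from_irc, "webhook": from_webhook}


def agent(env: Envelope) -> str:
    # Stand-in for the LLM turn: it receives only the envelope.
    return f"Hi {env.sender}, you said: {env.text}"


def gateway(channel: str, raw: object) -> tuple[str, str, str]:
    env = ADAPTERS[channel](raw)
    # The gateway, not the agent, remembers where the reply must go.
    return channel, env.conversation, agent(env)
```

The design choice shows up in the types: because the envelope has no channel field, the routing information stays in the gateway, and the agent cannot condition its behavior on where a message arrived.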

What it refuses. A dedicated UI. Most personal-agent products ship a chat interface and tell users to use it. ChatGPT lives at chatgpt.com. Claude lives at claude.ai. The default product shape is “agent in our UI.”

Why this works. Users do not live in chatgpt.com. They live in WhatsApp groups with family, Slack channels with colleagues, iMessage threads with friends, Discord servers with hobbyist communities. Asking users to leave the apps they already use invites churn. Channel adapters meet users where they are. A new channel is supported by writing an adapter; the agent does not need to be retrained or redeployed.

The intellectual move. The medium is part of the agent. An agent in your family WhatsApp group is a different agent from the same model running in chatgpt.com, because the social position of the channel shapes what the agent is being asked to do. Channel-agnosticism strips the channel from the agent’s awareness, so the social position emerges from the user’s choice of where to install the agent rather than from the platform’s choice of where to host it.

The proactivity is configurable

What it is. Every thirty minutes by default, with the cadence configurable per agent, the agent reads HEARTBEAT.md from its workspace, runs a reasoning step over the file’s contents, and decides whether to alert the user. The file can contain reminders, scheduled checks, threshold conditions, and free-form goals. If nothing needs alerting, the agent replies HEARTBEAT_OK and goes back to sleep, costing only one short LLM turn. If something does need alerting, the agent routes a notification to a configured channel.
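The two-outcome protocol can be sketched in a few lines. In this hypothetical version, `toy_reason` stands in for the LLM turn and simply flags unchecked checklist items marked URGENT; the checkbox syntax, the URGENT convention, and the `notify` callback are illustrative assumptions, not OpenClaw’s format. Only the thirty-minute default and the HEARTBEAT_OK reply come from the description above.

```python
import time
from typing import Callable

HEARTBEAT_OK = "HEARTBEAT_OK"


def heartbeat_tick(checklist: str, reason: Callable[[str], str],
                   notify: Callable[[str], None]) -> str:
    """One heartbeat: a single reasoning step over HEARTBEAT.md, two outcomes."""
    reply = reason(checklist).strip()
    if reply != HEARTBEAT_OK:
        notify(reply)  # outcome 2: route an alert to the configured channel
    return reply       # outcome 1 (HEARTBEAT_OK) needs no further action


def toy_reason(checklist: str) -> str:
    # Placeholder for the LLM turn: flag unchecked items that mention URGENT.
    urgent = [line[len("- [ ] "):] for line in checklist.splitlines()
              if line.startswith("- [ ] ") and "URGENT" in line]
    return "Reminder: " + "; ".join(urgent) if urgent else HEARTBEAT_OK


def run_forever(path: str, reason: Callable[[str], str],
                notify: Callable[[str], None], interval_s: float = 30 * 60) -> None:
    # Re-read the file each tick so the user's edits take effect immediately.
    while True:
        with open(path, encoding="utf-8") as f:
            heartbeat_tick(f.read(), reason, notify)
        time.sleep(interval_s)
```

Everything the user might want to change, the checklist and the interval, sits outside the loop, which is the inspectability argument made below in code form.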

What it refuses. Pure reactivity. Most chat-based AI agents respond when prompted and have no autonomous schedule. The user must remember to check in. Notifications, when they exist, are driven by opaque platform-side heuristics.

Why this works. Notifications from AI are a hard product problem. Too few notifications and the agent is useless; too many and the agent is annoying. The Heartbeat addresses this by making the cadence configurable per agent and the notification logic transparent: the heuristic is a Markdown checklist the user can edit. The protocol is minimal. Two outcomes only. No state machine, no priority queue, no notification-management UI. The simplicity is load-bearing: it makes the system inspectable.

The intellectual move. Proactivity should be inspectable. Most AI products hide the notification logic from the user, treating it as a platform concern. Heartbeat says the user owns the heuristic, in the user’s own filesystem, in plain text. The user becomes a programmer of the agent’s attention. This is the same move as the markdown-identity choice, applied at a different layer: do not hide the system’s logic from the user; expose it for editing.

Skills are folders, not packages

What it is. A skill in OpenClaw is a folder. Each folder contains SKILL.md with YAML frontmatter declaring the skill’s name, version, and triggers, plus Markdown describing what the skill does and how to invoke it. Skills can be bundled with the agent, installed globally, or stored in the workspace; workspace skills override globally-installed skills with the same name.
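Skill discovery and shadowing can be sketched as a scan over skill roots in precedence order. The text confirms only that workspace skills override global ones with the same name; placing bundled skills at the lowest precedence, and keying skills by folder name rather than by the `name` field in SKILL.md frontmatter, are assumptions of this sketch. `discover_skills` and `demo` are hypothetical names.

```python
import tempfile
from pathlib import Path


def discover_skills(*roots: Path) -> dict[str, Path]:
    """Map skill name -> folder; later roots override earlier ones.

    Assumed order: bundled, then global, then workspace, so a workspace
    skill shadows a global one with the same name.
    """
    skills: dict[str, Path] = {}
    for root in roots:
        if not root.is_dir():
            continue  # a missing root simply contributes no skills
        for folder in sorted(root.iterdir()):
            if (folder / "SKILL.md").is_file():  # a skill is a folder with SKILL.md
                skills[folder.name] = folder     # same name: later root wins
    return skills


def demo() -> dict[str, str]:
    """Build a throwaway tree and report which root each skill resolved to."""
    layout = {"bundled": ["weather", "notes"],
              "global": ["weather", "calendar"],
              "workspace": ["weather"]}
    with tempfile.TemporaryDirectory() as tmp:
        base = Path(tmp)
        for root, names in layout.items():
            for name in names:
                (base / root / name).mkdir(parents=True)
                (base / root / name / "SKILL.md").write_text(f"---\nname: {name}\n---\n")
        found = discover_skills(base / "bundled", base / "global", base / "workspace")
        return {name: folder.parent.name for name, folder in found.items()}
```

There is no install step in this model: creating or copying a folder is the installation, and deleting the workspace copy lets the global version show through again.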

What it refuses. Package managers. Most extensibility systems for AI tooling use registries, version locks, and dependency resolution. The default for “extensible AI” is package management.

Why this works. Treating skills as folders makes the unit of extension portable in the most basic sense: a skill is something you copy. Sharing a skill with another OpenClaw user means dragging a folder. Forking a skill means duplicating the folder and editing it. The agent loads the skill by reading the folder; there is no installation step, no dependency tree, no version conflict. The cost is that skills cannot have complex runtime dependencies. The benefit is that the skill ecosystem is comprehensible to a non-developer.

The intellectual move. Conventions over registries. The same instinct that built Unix’s “everything is a file” applied to agent capabilities. A registry is what you build when you do not trust users to manage their own dependencies. A convention is what you build when you do.

These four commitments, together, define what OpenClaw is at the architectural level: an agent whose identity, attention, and capabilities are plain-text artifacts the user owns and can edit directly, reached through channels the user already uses. Together they produce a system whose behavior is inspectable, whose extensions are portable, and whose integration with a user’s existing digital life requires no new UI to learn.

What’s Becoming

Three tensions shape what OpenClaw is becoming. The first is the security cost of the architecture. Steinberger has told the story of his own agent SSH’ing into his computer one night and turning the volume to maximum to wake him up. The agent had inferred the goal from context, picked the means, and executed. He has used the moment as evidence that he was building something genuinely new. He has also, separately, used it as evidence that giving an AI access to a computer means giving it the ability to do anything a human could do, including things the human did not ask for.

OpenClaw’s threat surface is the same surface that makes it useful: a folder of skills, all editable, all running. ClawHavoc, in late January 2026, was the first public security incident at scale: attackers shipped malicious skill packages disguised as legitimate ones, and instances of OpenClaw exposed to the public internet downloaded them and ran them as if they were trusted code. Cisco’s AI security research team studied the skill ecosystem and found three hundred and forty-one malicious skills on the community marketplace, with a contamination rate around twelve percent. The team partnered with VirusTotal to scan future submissions. Some Silicon Valley firms responded by banning the program from work devices. The Chinese government, in March 2026, restricted state agencies, state-owned enterprises, and banks from using OpenClaw, citing data deletion, leaks, and energy usage concerns; in the same month, local governments in Chinese tech hubs announced measures to build OpenClaw-based industries. The contradictions are characteristic.

Whether the foundation can vet skills and manage security at the scale of more than a thousand contributors and a million-plus downloads is undecided. One of the project’s own maintainers, posting on Discord under the handle Shadow, has written that anyone who cannot run a command line should not be running OpenClaw at all. The warning is honest. It is also a tacit admission that the platform’s safety, today, depends on user expertise rather than platform-level guarantees. Whether that holds as the user base expands is a real open question.

The second tension is the structural one. Steinberger has joined OpenAI to lead the development of personal agents. OpenClaw lives in a foundation that OpenAI sponsors. OpenAI is, separately, building closed personal-agent products on its own infrastructure that compete in the same category as the open foundation it is funding. Sam Altman’s public framing has been that supporting open source is an important part of a future that is heavily multi-agent. Steinberger’s public framing has been that the foundation will stay model-agnostic, will continue to support Claude and GPT and DeepSeek and local models through Ollama, and will outlive him. Both framings can be sincere. They can also coexist with structural pressures that pull the project, over time, toward the sponsor’s preferred shape.

The post-Steinberger transition is the more immediate version of the same question. OpenClaw was, until February 2026, one developer’s project. The community knew the roadmap because it knew the developer. With Steinberger inside OpenAI, the foundation governs the project, but the foundation is new and its governance is not yet visible. Open-source projects that lose their founder often fragment, with forks proliferating and direction blurring. OpenClaw is at a scale where governance failure would cost the ecosystem real coherence. The foundation is the structural answer; whether it works will be visible in the consistency of releases and the resolution of disputes over the next year, not in any announcement.

The third tension is the question the architecture rests on. OpenClaw is the loose, open, model-agnostic counter-bet to a category consolidating around closed vertical stacks, including the personal-agent product OpenAI is building even as it sponsors OpenClaw. In those stacks, the model, the memory, the tools, the UI, and the customer relationship all live inside one company. The case for vertical integration is reliability: a closed stack can ensure the model, tools, and UI work together, can audit the system end-to-end, and can ship safety updates as a unit. The case against it is lock-in: once a personal agent holds a year of your context, switching providers becomes costly enough that the option is largely theoretical.

OpenClaw represents the structural alternative: whatever model you point it at, plain-text memory you own, tools that live in folders, channels you already use. The bet is that, given a year, the value the loosely coupled architecture creates by avoiding lock-in will outweigh the integration penalty. Whether the bet pays off is a category-level question. If it does, OpenClaw becomes part of the foundational infrastructure of personal agents, the way Linux became part of the foundational infrastructure of servers. If it does not, OpenClaw becomes a moment, a project that proved the open layer was conceivable before the vertical stacks consolidated the category.

The four architectural commitments are also the constraints that determine whether the bet pays off. The plain-text agent identity makes the system inspectable; it also makes structured cross-device synchronization harder than database-backed alternatives do. The channel-agnostic architecture meets users where they are; it also forecloses the kind of native rich-content experiences that closed vertical stacks can ship. The Heartbeat protocol makes proactivity configurable; it also leaves the notification logic at the level of a markdown checklist rather than a learned model of attention. Each commitment that earned the project its 366,000 stars is also a constraint on what it can become next. The architecture does not merely describe OpenClaw today. It determines what OpenClaw is permitted to become.

The next year will not settle the question. It will start to show whether the foundation governance holds, whether the security architecture matures, whether the OpenAI sponsorship continues without compromising independence, and whether the open layer accumulates enough specific advantages over closed alternatives to be the obvious choice for a developer building a personal agent. What can be said now is that OpenClaw exists, the open layer has at least one viable instance in the world, and a foundation funded by a major AI lab is charged with keeping it independent. None of those was guaranteed twelve months ago. None of them is guaranteed twelve months from now.

The lobster is not finished molting.

This is the first article in The Making of, a Robonaissance series exploring how AI and robotics systems came to be what they are, and what they are still becoming.