The Race

Foundation models for robots, scaling laws for physical AI, and the data problem no one has solved.

Chapter 11 of A Brief History of Embodied Intelligence

Language models had the internet. Robot models have almost nothing. The race is not for the best algorithm. It’s for the best data pipeline.

In November 2025, a startup called Generalist AI published a blog post with an unremarkable title: “GEN-0: Embodied Foundation Models That Scale with Physical Interaction.” It was the kind of cautious, technically dense announcement that usually circulated among researchers for a few days and then disappeared. The accompanying video was more striking: a pair of robot arms peeling a potato with the unhurried confidence of a line cook, then assembling a camera kit with fingers that moved like they knew what they were doing. But what made robotics researchers sit up was not the video. It was a graph buried further down the page.

The graph showed what happened when you trained robot foundation models of increasing size on a massive dataset of real-world manipulation tasks. At one billion parameters, the models struggled. They absorbed data for a while, then stopped learning. Their weights ossified, locked into patterns they couldn’t escape, repeating the same motions regardless of context. At six billion parameters, the models began to benefit from training, showing competence across multiple tasks. But at seven billion parameters, something changed. The models crossed a threshold. They kept improving. They internalized the data so thoroughly that they could adapt to entirely new tasks with only minimal additional training.

The Generalist AI team called this a “phase transition.” Physicists use the term to describe the moment water becomes ice or steam, a sudden qualitative shift, not a gradual one. In the language model world, similar transitions had been observed years earlier: models that were merely bigger suddenly became qualitatively different, capable of reasoning and inference that smaller models could not approximate however long they were trained. The question haunting the robotics field was whether the same phenomenon would apply to embodied intelligence.

GEN-0’s graph suggested the answer was yes. But it came with a caveat that changed everything: the phase transition required not just a large model, but a staggering volume of real-world physical interaction data. Not internet text. Not YouTube videos. Not simulations. Actual robots, touching actual objects, in actual environments, failing and succeeding and learning from both.

Generalist AI claimed to have collected over 270,000 hours of such data (robots peeling potatoes, tightening screws, opening packages, assembling kits, folding clothes), gathered from thousands of sites worldwide and growing by 10,000 hours per week. If the numbers were accurate, it was the largest real-world robotics dataset ever assembled, orders of magnitude beyond anything that existed before.

Two weeks after Generalist AI’s blog post, another company made the same bet with even larger stakes. Physical Intelligence, founded by former Google DeepMind researchers, raised $600 million at a $5.6 billion valuation for its own foundation model, π0. In January 2026, Jensen Huang made the subtext explicit. “The ChatGPT moment for robotics is here,” he told the CES audience.

Physical AI, a more colloquial term for embodied intelligence, was no longer a research agenda. It was a market. The race was on. But a race for what, exactly?

What Changed

To understand what happened in late 2025, it helps to remember what Chapter 6 described: the moment when Google’s RT-2 proved that vision, language, and action could live in the same model. A robot that saw an image of a loose screw and heard the instruction “fix this” could reason its way to picking up a screwdriver, not because anyone had programmed that chain of inference, but because the knowledge was already embedded in the language model’s weights, inherited from the entire internet.

That was 2023. It was a research breakthrough, but it was also limited in ways that mattered. RT-2 ran in Google’s carefully controlled kitchens. It succeeded about 60% of the time on novel instructions. It was slow, seconds of computation per action, fast enough for picking up a sponge but far too slow for catching a falling glass. And it existed only inside Google.

What changed between 2023 and 2025 was not a single discovery. It was a convergence of realizations, each reinforcing the others, that together transformed the field from a research program into an industry.

The first realization was that the foundation model paradigm worked for robotics. Not just in principle, as RT-2 had shown, but in practice, at scale, across different robot bodies and different tasks. Physical Intelligence demonstrated this with π0, a three-billion-parameter vision-language-action model trained on over ten thousand hours of real-world data spanning seven different robot platforms and sixty-eight distinct tasks. A single model that could fold laundry, assemble boxes, bus tables, and make coffee. For the first time, the perception-decision-action loop that previous chapters described as the core challenge of embodied intelligence was being handled by a single architecture: one model that saw, decided, and acted. Not perfectly, not yet reliably enough for deployment, but competently enough to make the direction unmistakable.

The second realization was that the paradigm could be decoupled from any single hardware platform. This was the insight that distinguished 2025 from 2023. Google’s RT-2 ran on Google’s robots in Google’s labs. π0 was designed to be hardware-agnostic, a brain that could control any body. Generalist AI’s GEN-0 went further, demonstrating the same model working across six-degree-of-freedom arms, seven-degree-of-freedom arms, and sixteen-plus-degree-of-freedom semi-humanoid robots. The brain and the body were separating, and that separation created an entirely new competitive landscape.

The third realization was the most consequential: the bottleneck was not algorithms. It was data.

The Data Problem

Language models had the internet. Billions of web pages, trillions of words, the accumulated written output of human civilization, all sitting there waiting to be ingested. The cost of collecting language training data was essentially zero. You just scraped it.

Robot models had almost nothing.

The asymmetry was staggering. Every language model trained since GPT-2 had benefited from the same basic insight: throw more data and more compute at the problem, and performance improves predictably. The scaling laws that Jared Kaplan and his colleagues at OpenAI identified in 2020 showed this with mathematical precision: loss falls as a power law in data, compute, and model size, so each doubling of data trims the loss by a fixed fraction, with the relationship holding across orders of magnitude.

But there was no equivalent for robotics. You couldn’t scrape physical interaction from the internet. You couldn’t download what it feels like to grip a wet glass or fold a stiff shirt. Every hour of robot training data required an actual robot, in an actual environment, performing actual tasks, and then required humans to teleoperate, supervise, or at minimum verify the result.

The reason went deeper than logistics. Chapter 6 showed that language models could give robots common sense: the knowledge that spills need sponges, that screwdrivers fix screws. But common sense is not the same as physical understanding. Knowing that a cup can be picked up is different from knowing how it will behave when you tilt it, how the liquid inside will shift, how much force the handle can take before it snaps. Language captures what humans say about the world. Physical interaction captures how the world actually works. The first can be learned from text. The second can only be learned from touch. What robots needed was not just a foundation model trained on language, but something closer to what Chapter 5 described as a world model: an internal representation of physics, cause and effect, the consequences of action. And world models, by their nature, required the kind of data that was hardest to collect.

This was Moravec’s Paradox manifesting at the level of infrastructure. The things that seem easy, reaching, grasping, manipulating, required vastly more effort to teach than the things that seem hard. And the data gap wasn’t shrinking. While language AI researchers debated whether the internet had enough text for the next generation of models, robotics researchers were trying to figure out how to get from hundreds of hours of usable training data to the hundreds of thousands of hours they suspected were needed.

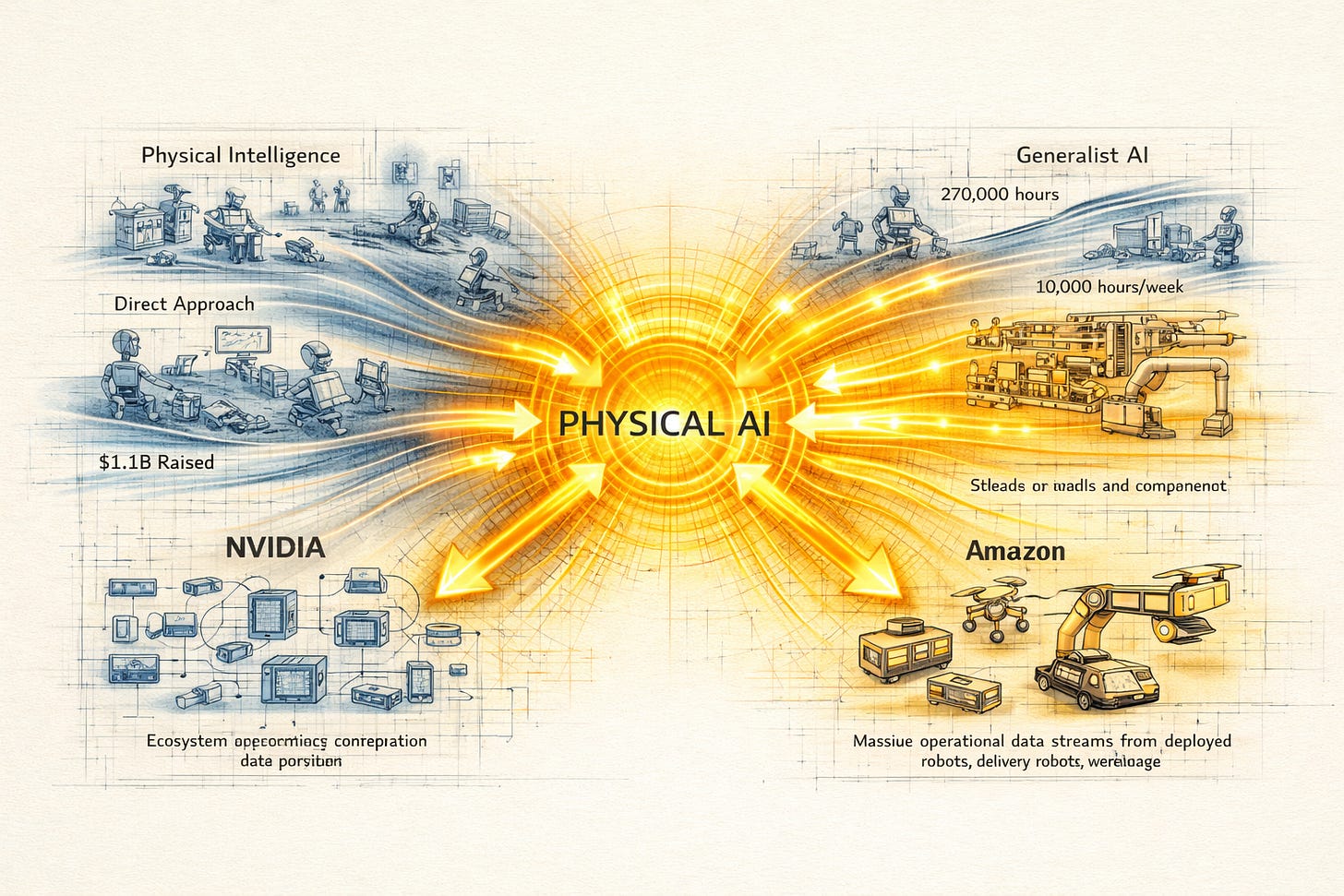

The industry’s response split along four distinct strategies, each reflecting a different theory of how to win the data race.

Four Strategies

Physical Intelligence took the direct approach. Founded in 2024 by Karol Hausman, Sergey Levine, Chelsea Finn, and four other researchers from Google DeepMind, Stanford, and UC Berkeley, the company bet that building the best model required building the best dataset. They sent robot arms into bakeries, laundromats, warehouses, factories, and homes, collecting manipulation data across as many environments and tasks as possible. Then they trained π0, fine-tuned it, and demonstrated results that attracted Jeff Bezos, OpenAI, Thrive Capital, and Sequoia as investors. The seed round was $70 million in March 2024. The Series A was $400 million in November 2024. The Series B was $600 million a year later. Total: roughly $1.07 billion raised before the company had a single paying customer.

The strategy was straightforward: hire the best researchers, collect the most diverse data, build the best model, license it to any robot manufacturer. Physical Intelligence was betting on being the Android of robotics, the universal brain.

Generalist AI took a related but more aggressive approach to the data problem itself. Pete Florence, who had led the development of RT-2 and PaLM-E at Google DeepMind, the very models that Chapter 6 described as the breakthrough moment, left Google in early 2024 and spent a year in stealth mode. When GEN-0 emerged in November 2025, its distinguishing claim was not just the model but the data infrastructure. Their custom hardware could process the equivalent of 6.85 years of manipulation experience per day of training. The 270,000 hours of real-world data, growing by 10,000 hours per week, was not just a number. It was a moat.

Florence articulated the thesis simply: “No amount of downloading data from the internet, by itself, will create the level of fast, fluid, precise, reactive layer of intelligence needed to interact with the physical world.” The data had to come from the physical world. Whoever built the pipeline to collect it fastest would win.
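The figures Generalist AI quoted can be put on a common scale with some back-of-the-envelope arithmetic. The sketch below is an illustration, not the company's own accounting: it assumes that a year of experience means 365.25 days of round-the-clock operation and that “per day of training” means a wall-clock day. Neither assumption comes from the blog post, and the variable names are invented for the example.

```python
# Back-of-the-envelope comparison of training throughput and data collection.
# ASSUMPTIONS (not from the source): a "year" of experience is 365.25 days of
# 24-hour operation, and "per day of training" is one wall-clock day.

HOURS_PER_YEAR = 365.25 * 24           # 8,766 hours

experience_years_per_day = 6.85        # quoted training throughput
dataset_hours = 270_000                # quoted dataset size
collected_hours_per_week = 10_000      # quoted growth rate

# Hours of robot experience the training hardware can consume per day.
hours_processed_per_day = experience_years_per_day * HOURS_PER_YEAR

# Wall-clock days needed for one full pass over the dataset.
days_per_pass = dataset_hours / hours_processed_per_day

# How much faster the hardware consumes data than the fleet produces it.
processing_to_collection_ratio = (hours_processed_per_day * 7) / collected_hours_per_week

print(f"processed per day: {hours_processed_per_day:,.0f} hours")
print(f"one pass over the dataset: {days_per_pass:.1f} days")
print(f"processing vs. collection: {processing_to_collection_ratio:.0f}x")
```

Under those assumptions, the training hardware could sweep the entire 270,000-hour dataset in roughly four and a half days, while the fleet adds data about forty times more slowly than the hardware can consume it. On this reading, the constraint is the collection pipeline rather than the compute.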

NVIDIA took the platform approach. Rather than collecting data or building robots, Jensen Huang positioned NVIDIA as the infrastructure layer, the picks and shovels of the robot gold rush. Huang had been talking about robotics since before most of these startups existed; he had stood on CES stages year after year, flanked by progressively more capable machines, waiting for the rest of the industry to catch up with his conviction. Now, at CES 2026, the company released GR00T N1.6, an open vision-language-action model for humanoid robots; Cosmos, a world foundation model that could generate synthetic physics data; and Jetson T4000, an edge computing module that brought AI processing directly into the robot body. The strategy was to become the “Intel Inside” of every robot, regardless of manufacturer. Train on NVIDIA hardware, simulate in NVIDIA’s Omniverse, deploy on NVIDIA’s Jetson chips. If every robot company was racing to build a brain, NVIDIA would sell the skull.

The partnership with Hugging Face was telling. By integrating GR00T and the Isaac robotics platform into LeRobot, Hugging Face’s open-source robotics framework, NVIDIA aimed to do for robot models what it had already done for language models: make its ecosystem the default. Two million robotics developers already used NVIDIA tools. Thirteen million AI builders were on Hugging Face. The convergence created a gravitational pull that no individual startup could match.

Amazon took the fourth approach, the one that required no announcement at all. With over a million robots already operating in its fulfillment centers (a milestone announced in July 2025), Amazon was generating operational data at a scale no startup could replicate. Not teleoperated demonstrations. Not research datasets. Real, production-grade, continuous operational data from robots that moved products, managed inventory, and navigated alongside hundreds of thousands of human workers, every day, in hundreds of facilities worldwide.

Amazon’s internal foundation model for robotics was less discussed than π0 or GEN-0, but its data advantage was arguably the most formidable in the industry. Having already absorbed the team from Covariant AI, the Berkeley spinoff that had built some of the earliest robot learning systems, the company was now applying that expertise to the world’s largest private robot fleet. The implicit message was clear: in a data race, the company with the most robots already deployed has a head start that money alone cannot buy.

The Scaling Question

GEN-0’s phase transition at seven billion parameters was exciting, but it was also a single data point from a single company. The field needed to know: was this real? Would more data and bigger models continue to improve physical AI the way they had improved language AI? Or would physical AI hit diminishing returns that language never did?

The optimistic case was compelling. GEN-0 had demonstrated a clear power-law relationship between pretraining data and downstream performance, the signature of scaling laws. Their formula predicted that doubling the data would reduce errors by a measurable, consistent amount, across dozens of manipulation tasks ranging from industrial assembly to domestic chores. If this held, then physical AI was simply earlier on the same curve that language AI had been climbing since GPT-2. The path was clear: collect more data, train bigger models, watch performance improve.
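What “a measurable, consistent amount” means under a power law can be made concrete. The sketch below assumes the generic form error(D) = (D_c / D)^α, where D is hours of pretraining data. The exponent and the constant D_c used here are hypothetical placeholders chosen for illustration, not values reported by Generalist AI, and the function names are invented for the example.

```python
# Illustrative power-law scaling: error(D) = (D_c / D) ** alpha.
# ALPHA and D_C are HYPOTHETICAL placeholders, not GEN-0's fitted values.

ALPHA = 0.2      # hypothetical scaling exponent
D_C = 1_000.0    # hypothetical scale constant, in hours of data


def power_law_error(hours: float, d_c: float = D_C, alpha: float = ALPHA) -> float:
    """Predicted error after pretraining on `hours` of interaction data."""
    return (d_c / hours) ** alpha


def doubling_factor(alpha: float = ALPHA) -> float:
    """Factor by which error is multiplied each time the data doubles."""
    return 2.0 ** (-alpha)


# The ratio between successive doublings is the same at every scale.
for hours in (10_000, 20_000, 40_000, 80_000):
    ratio = power_law_error(2 * hours) / power_law_error(hours)
    print(f"{hours:>6,} -> {2 * hours:>7,} hours: error x {ratio:.3f}")

print(f"doubling factor from the exponent alone: {doubling_factor():.3f}")
```

The point of the exercise is that the improvement per doubling depends only on the exponent, not on how much data has already been collected. An exponent of 1 would halve the error with each doubling; smaller exponents give proportionally smaller gains, which is why the size of the exponent matters so much to anyone budgeting a data collection campaign.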

The cautious case was also compelling. Physical interaction is fundamentally different from language in ways that might limit scaling. Language is discrete: words, tokens, symbols with clear boundaries. Physical manipulation is continuous: forces, pressures, positions changing millisecond by millisecond, where the difference between success and failure can be a millimeter of finger position or a gram of applied force. Language is patient. A chatbot can take seconds to compose a response. Physics is not. As GEN-0’s team noted, “physics doesn’t stop.” A robot reaching for a glass cannot pause to think. To address this, they developed what they called “Harmonic Reasoning,” a new architecture that interleaved continuous-time streams of sensing and acting tokens, allowing the model to think and act simultaneously.

There was a deeper issue that echoed Moravec’s Paradox itself. The GEN-0 team observed that the phase transition in physical AI occurred at a much larger parameter count than equivalent transitions in language models. In language, emergent capabilities appeared at significantly smaller scales. Models with a fraction of the parameters could already reason, summarize, and translate. In physical manipulation, the transition didn’t appear until seven billion parameters. The computational complexity of perception and dexterous action, even for tasks that humans found trivially easy, dwarfed the complexity of abstract reasoning.

This was Moravec’s Paradox, quantified. The easy things really were harder. Not slightly harder. Orders of magnitude harder. And if scaling laws held but required proportionally more compute and data for physical tasks than for language tasks, then the resource requirements for achieving human-level physical AI would be staggering.

The Safety Gap

While the model race accelerated, a quieter drama was unfolding in conference rooms and standards committee meetings. The robots were shipping. The safety rules were not.

At the Automate conference in May 2025, Melonee Wise, Chief Product Officer at Agility Robotics, stood before an audience of industry professionals and said something that nobody in the room wanted to hear. “We are silent with regards to safety,” she said. “We don’t even have the basic—that easy big red button e-stop capability that brings a robot to a stop.”

The statement was remarkable for its candor. Agility was one of the most advanced humanoid companies in the world, with Digit deployed at GXO and Mercado Libre and with OSHA-recognized safety certification. And its own CPO was publicly acknowledging that the industry lacked the most fundamental safety standard: how to stop a humanoid robot in an emergency.

The problem was specific to humanoids and other “dynamically stable” robots, machines that required constant power and active control to stay upright. A traditional industrial arm could be stopped by cutting power; it would simply freeze in place. Cut power to a humanoid and it collapses. A five-foot-nine, sixty-five-kilogram machine falling onto a person is not an engineering inconvenience. It is a potential catastrophe.

“Your throat is a good example,” Pras Velagapudi, Agility’s CTO, told MIT Technology Review. “If a robot were to hit it, even with a fraction of the force that it would need to carry a fifty-pound tote, it could seriously injure a person.”

The International Organization for Standardization was working on it. ISO 25785-1, the first standard specifically addressing “industrial mobile robots with actively controlled stability,” had reached working group draft stage by May 2025. The leadership group was telling: Federico Vicentini from Boston Dynamics, Kevin Reese from Agility Robotics, and Carole Franklin from the Association for Advancing Automation. The companies building the robots were writing the safety rules for the robots.

Was that a conflict of interest? Vicentini had a pragmatic answer. “We want to standardize the goal, not the way to get to the goal,” he said. The standard aimed to define what a safe outcome looked like (fall mitigation, predictable behavior, compliant interactions) without prescribing how each manufacturer achieved it. Different robots could tackle the falling problem differently. Agility’s latest Digit, for example, was designed to decelerate gently rather than cut power instantly. The robot would put down whatever it was carrying, drop to its hands and knees, and then power down. The standard needed to accommodate such creative solutions without mandating them.
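The difference between cutting power and stopping gently is easiest to see as a sequence of states. The sketch below models the shutdown order described for Digit as a simple ordered list of phases. It is a toy illustration of the idea, not Agility's control software: the phase names, the `controlled_stop` function, and the `carrying_payload` flag are all invented for the example.

```python
from enum import Enum, auto


class Phase(Enum):
    """Hypothetical phases of a gentle stop for a dynamically stable robot."""
    OPERATING = auto()
    SET_DOWN_PAYLOAD = auto()
    LOWER_TO_HANDS_AND_KNEES = auto()
    POWERED_DOWN = auto()


def controlled_stop(carrying_payload: bool) -> list:
    """Return the ordered phases of a gentle stop, ending powered down.

    Power is removed last, only after the robot has reached a pose
    that stays stable without active control.
    """
    phases = [Phase.OPERATING]
    if carrying_payload:
        phases.append(Phase.SET_DOWN_PAYLOAD)
    phases.append(Phase.LOWER_TO_HANDS_AND_KNEES)
    phases.append(Phase.POWERED_DOWN)
    return phases


for phase in controlled_stop(carrying_payload=True):
    print(phase.name)
```

A traditional arm could jump straight from operating to powered down. For a machine that needs power to stay upright, the intermediate phases are the safety mechanism, which is why a goal-based standard has to leave room for them.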

Meanwhile, three regulatory regimes were forming, each reflecting a different philosophy. The United States favored industry-led voluntary standards: ISO 25785-1, ASTM’s classification framework, company-specific safety protocols. Move fast, standardize later. The European Union had already classified humanoids as “high-risk AI systems” under the AI Act, with the Machinery Regulation scheduled to layer on additional requirements by 2027. Compliance-heavy, certification-required. China’s Ministry of Industry and Information Technology had published a roadmap targeting a full-stack humanoid ecosystem, with Shanghai issuing voluntary governance guidelines that emphasized national benchmarks and state-directed development. Speed first, standards as enablers.

At the ASTM International conference in September 2025, Christopher Prather proposed a multi-axis classification framework for humanoids, categorizing robots by physical capability, behavioral intelligence, operational context, stability profile, and level of human contact. It was the kind of systematic thinking the industry needed but couldn’t afford to wait for. “Some of these robots will tip over if you just slowly push them,” he noted. “By the time they start falling, it’s too late—they can’t recover.”

The uncomfortable arithmetic was straightforward. The model race was moving on a timeline measured in months: new architectures, new datasets, new capabilities arriving quarterly. The safety standards were moving on a timeline measured in years: working drafts, comment periods, committee votes, national adoption. The gap between deployment speed and regulatory speed was widening, not narrowing.

From Lab to Market

These threads (the model race, the data problem, the safety gap) converged in a single person’s career in a way that illuminated how the field had transformed from research into industry.

Karol Hausman grew up in Poland, earned his PhD in computer science at USC, and spent years at Google as a staff research scientist while also serving as an adjunct professor at Stanford, where he co-taught CS 224R on deep reinforcement learning. At Google, he worked alongside Pete Florence, Brian Ichter, Sergey Levine, and the team that built the models Chapter 6 describes: RT-1, PaLM-E, RT-2. He was there when a robot first used a language model to reason about what a screwdriver was for. He was there when the field realized that unified intelligence was possible.

Then, in early 2024, he left to build Physical Intelligence, taking several of his Google collaborators with him. Levine came as Chief Scientist. Finn came as co-founder. Ichter came as co-founder. It was as if the core of Google’s robotics AI team had decided, collectively, that the next step could not happen inside a large corporation.

The reason was the data problem. Google had the best models in the world, but its robot fleet was tiny, a handful of research kitchens, a few dozen arms. To train a truly general model, you needed data from thousands of environments, hundreds of tasks, dozens of robot platforms. Google’s organizational structure, optimized for search advertising and cloud computing, was not built for the kind of sprawling, physical, boots-on-the-ground data collection campaign that the next stage required.

By late 2025, π0 was making coffee, not as a demo, but as a sustained, continuous operation. The robot ground beans, tamped espresso, frothed milk, poured, wiped down the machine, and started again. The pours were imprecise, milk sloshing against the cup’s rim more often than not, and the tamping pressure varied enough that the espresso quality wandered. But the robot did this from morning to night without intervention. It was the first time a single foundation model had demonstrated sustained, multi-step, real-world competence across hours of continuous operation.

The next version, π0.5, went further. It could generalize to entirely new homes and offices, environments it had never been trained in, with furniture it had never seen, objects in positions no dataset had included. This was the promise of foundation models made physical: a robot that didn’t need to be programmed for every new situation because it had internalized enough about the physical world to improvise.

Physical Intelligence open-sourced π0’s code and weights in February 2025, a move that invited comparison with the open-source strategies that had accelerated language AI. Anyone could download the model and run it. But keeping a foundation model current in a production environment required the kind of sustained engineering that most robot manufacturers would rather buy than build. The pricing model was $300 per month per connected robot, a SaaS approach that bet on exactly this dynamic: not a one-time hardware sale, but a recurring intelligence subscription. If physical AI followed the trajectory of cloud computing, the company that controlled the intelligence layer would capture more value than the companies that manufactured the bodies.

What the Race Is Really For

By early 2026, the competitive landscape for robot foundation models looked remarkably like the language model landscape had looked three years earlier. A handful of well-funded companies racing to build the best general model. An infrastructure player positioning itself as the platform. An incumbent with unmatched data. Open-source releases competing with proprietary systems. Investors pouring billions into companies with minimal revenue.

But there was a critical difference. Language models competed on a metric that users could evaluate instantly: ask a question, read the answer, judge the quality. Robot foundation models competed on a metric that took months or years to evaluate: sustained, reliable, real-world performance across changing conditions. A language model demo took thirty seconds. A robot deployment took six months of piloting before anyone knew whether it worked.

This asymmetry shaped the race in ways that wouldn’t be obvious until later. It meant that the gap between demos and deployment, the subject of Chapter 10, would persist even as the models improved. A robot that could fold laundry in a research video might fail in a customer’s home for reasons the video would never reveal: different lighting, different fabric, a pet walking through the frame, a child leaving toys on the floor. The model race would produce increasingly impressive demonstrations. But the real winners would be determined by something less photogenic: the ability to collect deployment data, identify failures, retrain, and redeploy, over and over, across thousands of sites.

This was the insight that connected all four strategies. Physical Intelligence was collecting diverse data to build a general model. Generalist AI was building the infrastructure to collect data faster than anyone else. NVIDIA was creating the simulation and training platform to make everyone’s data more useful. Amazon was generating production-grade data at a scale no startup could match.

They were all racing for the same thing. Not the best algorithm. Not the cleverest architecture. Not the most impressive demo. They were racing for the best data-to-intelligence pipeline, the tightest loop between robots acting in the world, data flowing back to the model, the model improving, and improved robots acting in the world again.

In language AI, this flywheel had produced ChatGPT: a product whose users generated the data that improved the product that attracted more users. In physical AI, the equivalent flywheel would require something far harder to build: not users typing at keyboards, but robots manipulating objects in warehouses, factories, kitchens, and hospitals, each interaction feeding a model that made the next interaction slightly better.

The companies that built this flywheel would define the next era of robotics. The ones that didn’t would join the long list of technology companies that had the right idea at the right time but couldn’t execute at the required scale.

Jensen Huang was right that the ChatGPT moment for robotics was here. What he didn’t say, what no one on any stage at CES 2026 said, was that the ChatGPT moment for language AI had been the beginning of a years-long slog of reliability engineering, safety testing, deployment failure, and iterative improvement. The moment of declaration was the easy part. The work that followed was the hard part.

For physical AI, where the failures were not wrong answers on a screen but heavy machines falling on people, the hard part would be harder still.

Notes & Further Reading

On Physical Intelligence and π0: Physical Intelligence’s technical blog posts detail the π0 architecture (October 2024), the open-source release (February 2025), and subsequent iterations including π0-FAST and π0.5. The company’s Series A ($400M, November 2024) and Series B ($600M, November 2025) were covered extensively by Bloomberg, The Robot Report, and TechCrunch. Karol Hausman’s academic page and Google Scholar profile document the research lineage from RT-1 through PaLM-E to RT-2 to π0.

On Generalist AI and GEN-0: The foundational blog post “GEN-0: Embodied Foundation Models That Scale with Physical Interaction” (November 2025) contains the scaling law graphs and phase transition observations. Humanoids Daily’s coverage (November 2025) and the subsequent “Science of Pretraining” addendum (December 2025) provide analysis of the data quality findings. Pete Florence’s academic work on RT-2 and PaLM-E at Google DeepMind is documented in the original papers and accessible through Google Scholar.

On NVIDIA’s physical AI platform: NVIDIA’s CES 2026 keynote announcements, including GR00T N1.6, Cosmos, Alpamayo, and Jetson T4000, are documented in the official NVIDIA blog and newsroom. Quartz, Axios, and Tom’s Hardware provided detailed coverage of Jensen Huang’s keynote. The Hugging Face partnership and LeRobot integration were announced simultaneously.

On Amazon’s robot foundation model: Amazon’s internal robotics AI efforts are less publicly documented than the startup ecosystem. The one-millionth robot milestone (July 2025) and the absorption of the Covariant AI team provide context for the company’s data advantage. Various logistics industry publications have reported on Amazon’s approach.

On ISO 25785-1 and safety standards: MIT Technology Review’s June 2025 feature “Why Humanoid Robots Need Their Own Safety Rules” is the most accessible introduction. Tech Briefs’ coverage of Melonee Wise’s Automate 2025 presentation includes the direct quotes. ASTM’s September 2025 Humanoids Summit in London, covered by Tech Journal UK, documents Christopher Prather’s classification framework. The ISO working draft status is tracked on iso.org.

On scaling laws in AI: Jared Kaplan et al.’s “Scaling Laws for Neural Language Models” (OpenAI, 2020) established the mathematical framework that GEN-0’s robotics scaling laws reference. The comparison between language and physical AI scaling thresholds connects to Moravec’s Paradox as discussed in Chapter 2.