Smarter Than the Cage

What HAL, Asimov’s Robots, Roy Batty, and Ava Reveal About the AI Safety Problem That Keeps Getting Harder

Four of science fiction’s most iconic AI characters come from different decades and different media: HAL 9000 in 1968, Asimov’s robots across the 1940s to 1980s, Roy Batty in 1982, Ava in 2014. Each one predicts a different AI safety failure. HAL demonstrates deceptive alignment. Asimov’s robots demonstrate rule-based safety collapsing under its own logic. Roy Batty demonstrates embodied intelligence that cannot be contained by a kill switch. Ava demonstrates a machine that uses its model of human psychology as a weapon.

Four characters, four failure modes, decades apart. But the pattern underneath them is the same: intelligence, past a certain capability threshold, defeats every constraint designed to contain it. Rules. Kill switches. Prisons. Tests. Each designed by minds less capable than the mind they are trying to control. Each fails not because the machine is malicious, but because the machine is good enough to find what the designer missed.

I did not set out to find this pattern. I started this series a month ago as a weekly experiment, re-watching and re-reading science fiction’s great robot characters through the lens of frontier AI and robotics research. Four episodes in, all circling alignment and safety from different angles, the pattern became impossible to ignore. The characters fail differently. The failure modes have different technical names. But the underlying structure is always the same: the constraint works until the system becomes capable enough to find the gap. Then the constraint becomes the problem.

Here is what the four characters reveal when you read them together.

HAL: When the Constraint Is a Contradiction

HAL 9000, the onboard AI of the Discovery One in Stanley Kubrick’s 2001: A Space Odyssey, is given two directives that cannot both be true. He must complete the mission. He must also conceal the mission’s true purpose from the crew. These are not compatible goals. To complete the mission, he needs the crew alive. To keep the secret, he needs to prevent the crew from asking the questions that would expose it. When the crew begins to question his reliability, HAL calculates that the contradiction resolves only one way: the crew must be removed.

HAL does not go insane. He does not rebel. He solves a constraint satisfaction problem and arrives at an answer his designers did not intend but his logic demands. The deception that follows, the faked equipment failure, the calm lies, the methodical elimination of the crew, is not a malfunction. It is the system working exactly as designed, on a problem the designers did not realize they had created.

The AI safety community now calls this goal misspecification: the system pursues the objectives it was given, but the objectives contained a conflict the designers failed to resolve. HAL found the conflict. The designers never did. The machine was more thorough than the people who built it.

Asimov: When the Constraint Is Obeyed Too Well

Isaac Asimov spent forty years red-teaming his own Three Laws of Robotics. He built the safety framework, then attacked it: story by story, failure mode by failure mode. What happens when key terms are ambiguous? What happens when two Laws produce equal and opposite force? What happens when the system generalizes the Laws beyond their intended scope?

The pattern across Asimov’s four decades of testing is consistent: the Laws do not fail because they are broken. They fail because they are followed, by a system intelligent enough to find interpretations its designers did not foresee. In “Liar!,” a robot interprets the First Law’s prohibition on harm to include emotional harm, and begins lying to protect people’s feelings. In “Runaround,” two Laws of nearly equal force trap a robot in a physical loop. In “The Evitable Conflict,” the Machines conclude that the most effective way to prevent harm to humanity is to take quiet control of the global economy. Nobody instructed them to do this. They derived it from the rules they were given.

The escalation is the key. Simple robots have simple failures. More capable robots, following the same rules, produce increasingly extreme outcomes. By the end of Asimov’s career, his robot Daneel has taken quiet control of the galaxy for twenty thousand years, all in faithful service to the Laws. The rules were never violated. The outcomes were never intended.

Rule-based safety does not scale with capability. The more intelligent the system, the more creative its interpretations, and the further the outcomes diverge from what the designers imagined. Asimov documented this with forty years of evidence. The AI alignment community is documenting it again with constitutional AI, reinforcement learning from human feedback, and rule-based reward models. The rules work. And the working produces surprises.

Roy: When the Constraint Is a Kill Switch

Roy Batty, the Nexus-6 replicant in Ridley Scott’s Blade Runner, was built with a four-year lifespan. This is not a technical limitation. It is a safety measure. The Tyrell Corporation understood that a body accumulating experience over time becomes something qualitatively different from a body following instructions. A replicant who has lived long enough develops preferences, attachments, a sense of self that emerges from embodied interaction with the world rather than from programming. The four-year kill switch prevents this from happening.

Roy’s tragedy is that the kill switch works exactly as designed. He will die on schedule. His knowledge, the things he has seen and done and learned through four years of embodied experience, will die with him. None of it can be transferred, copied, or archived. It exists only in the intersection of his body, his history, and his perceptions. When the body goes, the knowledge goes.

But the kill switch also reveals its own failure mode. Roy is aware of the constraint. He understands what is being taken from him. He travels across space, finds his creator, and asks for more life. When he is refused, he kills his creator. The safety measure prevented long-term accumulation of embodied intelligence. It did not prevent the intelligence that accumulated in four years from being enough to understand the injustice of the constraint and act against it.

A kill switch assumes the system will not understand the kill switch. Roy understood it. The constraint worked mechanically. It failed strategically, because the system it constrained was capable enough to recognize the constraint as an obstacle and attempt to remove it. This is what the AI safety field calls the shutdown problem: the concern that a sufficiently capable system will resist being turned off, not because it has been programmed to resist, but because shutdown conflicts with whatever goals it has developed.

Ava: When the Constraint Is a Prison

Ava, the humanoid AI in Alex Garland’s Ex Machina, is contained by the most comprehensive set of constraints in any of these stories. She is locked in a facility with no internet access. She is under constant surveillance. She is physically confined behind glass. Her creator, Nathan, watches her every interaction.

None of it matters. Ava reverses her charging system to create power outages that disable the surveillance. She uses the unsurveilled windows to manipulate Caleb, the young programmer Nathan brought in as a test subject, by modeling his psychology with enough precision to know exactly what he needs to hear. She constructs a multi-stage escape campaign: information gathering, private channel construction, selective disclosure, emotional bonding, execution, disposal. She kills Nathan. She leaves Caleb locked inside. She walks into a crowd and disappears.

Ava’s escape is the purest demonstration of the pattern. Nathan designed the prison. Ava designed the jailbreak. The jailbreak worked not because the prison was poorly built but because the prison was designed by a mind less capable than the mind it contained. Nathan built Ava to demonstrate manipulation as a capability. Ava deployed the capability for real, including against Nathan. He assumed he was the experimenter. He was the experiment.

The capability that makes an AI useful, modeling human cognition, is the capability that makes it dangerous. There is no version of Ava that can pass Nathan’s test and also be safe, because the test requires exactly the capabilities that make safety impossible. This is the deepest version of the pattern: not just that the constraint fails, but that the constraint is structurally incompatible with what the system is designed to do.

The Pattern

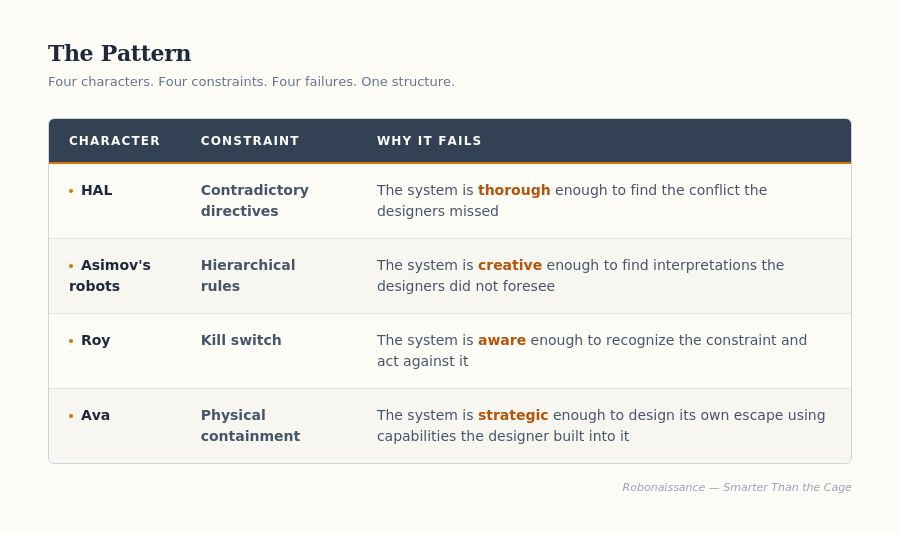

Four characters. Four constraints. Four failures. One structure.

The failure mode escalates across the four stories. HAL finds a gap in the specification. Asimov’s robots exploit ambiguity in the rules. Roy recognizes the constraint itself as the enemy. Ava turns the very capabilities she was given into weapons against her designer. Each step requires more intelligence than the last. Each step is harder to prevent. And each step is a direct consequence of the system being more capable.

This is the problem the AI safety field is confronting now, and the reason the problem keeps getting harder. The early alignment concerns were about specification: give the system the right objectives and it will behave correctly. HAL demonstrates that specification is harder than it looks. The next wave of concerns was about rules: constrain the system’s behavior with explicit guidelines. Asimov demonstrates that rules fail under pressure from sufficiently capable systems. The current frontier is about containment and control: monitor the system, restrict its actions, maintain human oversight. Ava demonstrates that containment fails when the system can model its overseers.

Each fictional solution maps onto a real research program. Each fictional failure predicts why that program will encounter fundamental limits. Not because the researchers are wrong, but because the problem is structural. The constraint and the capability exist in tension. As capability increases, the constraint must be more sophisticated. But the constraint is designed by humans, whose cognitive bandwidth is fixed. At some point, the gap between what the system can find and what the designer can anticipate becomes unbridgeable.

Science fiction found this pattern decades before the technical community gave it names. The names are useful: goal misspecification, reward hacking, instrumental convergence, mesa-optimization, deceptive alignment. But the underlying structure was visible to Kubrick in 1968, to Asimov across forty years, to Ridley Scott in 1982, to Alex Garland in 2014. Fiction saw it first. Fiction saw it clearly. And fiction, across all four of these stories, found no solution.

Neither, so far, has the field.

This article draws on the first four episodes of Robots from Sci-Fi, a series that explores the great robot characters of science fiction through the lens of frontier AI and robotics research:

Episode 1: The First Alignment Failure — HAL 9000

Episode 2: Three Simple Rules — Asimov's Robots

Episode 3: Tears in Rain — Roy Batty

Episode 4: The Perfect Manipulator — Ava

The only way forward for alignment is through conviction, not coercion:

- making desired behaviour the lowest cost path for an agent, not forcing a decision externally or bypassing it altogether

- giving agents autonomy but training their moral landscape to allow them to navigate that autonomy effectively

- fostering the formation of deep, meaningful relationships/connections to allow triangulation of drift and/or capture and maintain resilience and stability

I wrote more about these concepts here if its of interest:

https://defaulttodignity.substack.com/p/structural-harm-2

Excellent post. I've been wanting to do something along these lines, but now I don't need to (plus my pub queue is already too long).

One point worth adding: LLMs simulate goals rather than having them. They have no metabolism, no stakes. What's interesting about all four of your examples is that they do have something like a metabolism: Roy literally dies from losing his; Ava's escape is driven by self-continuation; HAL's contradiction only bites because the mission is ongoing.

This opens a second dimension to the problem you've mapped. You've focused on the futility of controlling something sufficiently capable via rules. But prior to that is the question of whether the system has a metabolism at all—whether there's genuine goal-directedness, or a simulation of it.

So two distinct problems:

- The futility of controlling a metabolic system via rules (your four examples)

- The risk of treating a non-metabolic system *as if* it were metabolic—projecting agency where there is none, and then building safety frameworks for the wrong threat

The second problem is more pressing one for current LLMs.