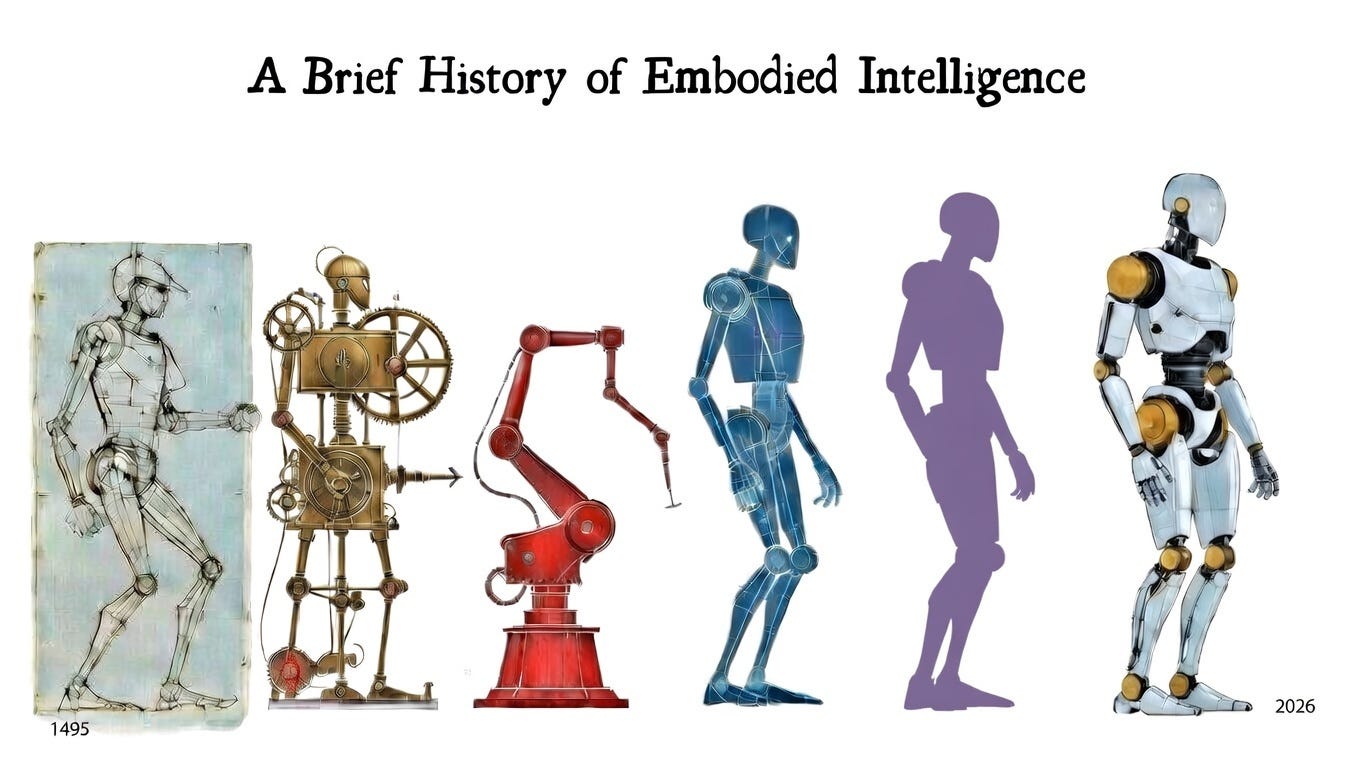

A Brief History of Embodied Intelligence

From Da Vinci's Mechanical Knight to Optimus

“What is the boundary between the living and the mechanical? Where does the machine end and the human begin?”

We live in an age of artificial intelligence miracles. Machines can now write poetry, generate photorealistic images, hold conversations that feel genuinely human, and pass professional exams that stump most people. Every few months brings anothe…